yoloshii/ClawMem

On-device memory layer for AI agents. Claude Code, Hermes and OpenClaw. Hooks + MCP server + hybrid RAG search.

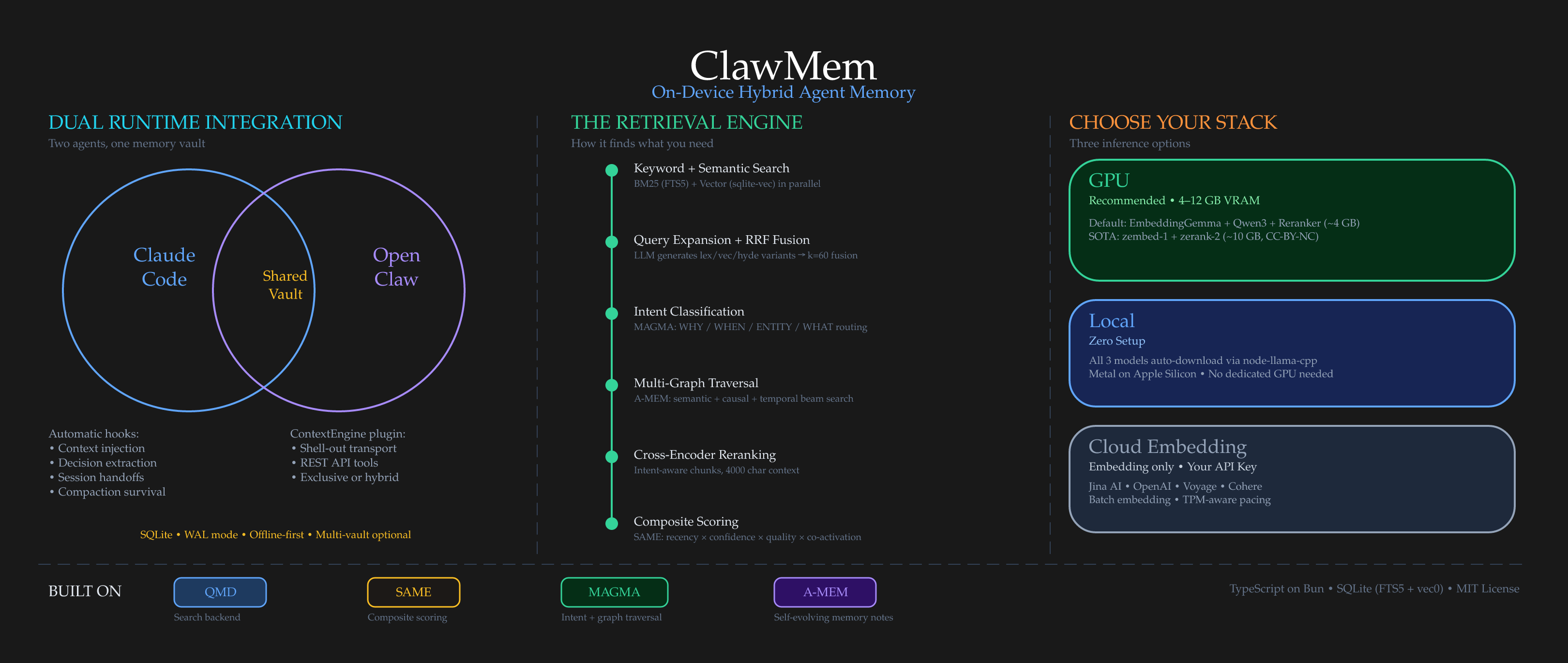

ClawMem is a local-first memory and context engine that provides AI agents with persistent, on-device knowledge. It utilizes a hybrid architecture combining multi-signal retrieval, intent classification, and multi-graph traversal to surface relevant context from markdown notes and session transcripts. The system integrates with Claude Code, OpenClaw, and Hermes agents via a shared SQLite vault. It enables agents to capture decisions, generate session handoffs, and maintain a self-evolving memory layer without cloud dependencies.

- Local-first architecture with no API keys or cloud dependencies

- Hybrid retrieval using BM25, vector search, and knowledge graphs

- Integrates via MCP server, Claude Code hooks, and agent plugins


ClawMem — On-device memory layer for Claude Code, OpenClaw, and Hermes agents

On-device memory for Claude Code, OpenClaw, Hermes, and AI agents. Retrieval-augmented search, hooks, and an MCP server in a single local system. No API keys, no cloud dependencies.

ClawMem fuses recent research into a retrieval-augmented memory layer that agents actually use. The hybrid architecture combines QMD-derived multi-signal retrieval (BM25 + vector search + reciprocal rank fusion + query expansion + cross-encoder reranking), SAME-inspired composite scoring (recency decay, confidence, content-type half-lives, co-activation reinforcement), MAGMA-style intent classification with multi-graph traversal (semantic, temporal, and causal beam search), and A-MEM self-evolving memory notes that enrich documents with keywords, tags, and causal links between entries. Pattern extraction from Engram adds deduplication windows, frequency-based durability scoring, and temporal navigation.
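As a rough illustration of one ingredient named above, reciprocal rank fusion merges several ranked result lists (for example BM25 and vector hits) by summing `1 / (k + rank)` per document. This is a generic sketch of the technique, not ClawMem's code: the function name, the document paths, and the constant `k = 60` (a commonly used default) are assumptions.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of doc IDs into one list, best first."""
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Sort by fused score, breaking ties by doc ID for determinism
    return sorted(scores, key=lambda d: (-scores[d], d))

# Hypothetical result lists from two retrievers
bm25_hits = ["notes/auth.md", "notes/db.md", "notes/api.md"]
vector_hits = ["notes/api.md", "notes/auth.md", "notes/cache.md"]
fused = reciprocal_rank_fusion([bm25_hits, vector_hits])
```

Documents that rank well in both lists rise to the top, which is why fusion tends to be more robust than either signal alone.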

Integrates via Claude Code hooks, an MCP server (works with any MCP-compatible client), a native OpenClaw plugin, or a Hermes Agent MemoryProvider plugin. All paths write to the same local SQLite vault. A decision captured during a Claude Code session shows up immediately when an OpenClaw or Hermes agent picks up the same project.

TypeScript on Bun. MIT License.

What It Does

ClawMem turns your markdown notes, project docs, and research dumps into persistent memory for AI coding agents. It automatically:

- Surfaces relevant context on every prompt (context-surfacing hook)

- Bootstraps sessions with your profile, latest handoff, recent decisions, and stale notes

- Captures decisions, preferences, milestones, and problems from session transcripts using a local GGUF observer model

- Imports conversation exports from Claude Code, ChatGPT, Claude.ai, Slack, and plain text via `clawmem mine`, with optional post-import LLM fact extraction (`--synthesize`) that pulls structured decisions / preferences / milestones / problems and cross-fact links out of otherwise full-text conversation dumps (v0.7.2)

- Generates handoffs at session end so the next session can pick up where you left off

- Learns what matters via a feedback loop that boosts referenced notes and decays unused ones

- Guards against prompt injection in surfaced content

- Classifies query intent (WHY / WHEN / ENTITY / WHAT) to weight search strategies

- Traverses multi-graphs (semantic, temporal, causal) via adaptive beam search

- Evolves memory metadata as new documents create or refine connections

- Infers causal relationships between facts extracted from session observations

- Detects contradictions between new and prior decisions, auto-decaying superseded ones (with an additional merge-time contradiction gate in the consolidation worker that blocks cross-observation contradictions before they land, v0.7.1)

- Guards against cross-entity merges during consolidation — name-aware dual-threshold merge safety compares entity anchors before merging similar observations, preventing "Alice decided X" from merging into "Bob decided X" (v0.7.1)

- Prevents context bleed in derived insights — the Phase 3 deductive synthesis pipeline validates every draft against an anti-contamination wrapper (deterministic entity contamination check + LLM validator + dedupe) before writing cross-session deductive observations (v0.7.1)

- Frames surfaced facts as background knowledge: `context-surfacing` wraps injected content in `<instruction>` + `<facts>` + `<relationships>` blocks, telling the model to treat facts as already-known and exposing memory-graph edges between surfaced docs directly in-prompt (v0.7.1)

- Injects knowledge-graph facts as structured triples: when the user's prompt mentions entities already known to the vault, `context-surfacing` resolves them via a three-path prompt-only extractor (canonical IDs, proper nouns, lowercased n-grams), queries the SPO graph for current-state triples, and appends a `<vault-facts>` block of raw `subject predicate object` lines to `<vault-context>` (off for `speed`, 200 tokens on `balanced`, 250 on `deep`, token-truncated at the triple boundary) (v0.9.0)

- Session-scoped focus topic boost: `clawmem focus set "<topic>" --session-id <id>` writes a per-session focus file that steers query expansion, reranking, chunk selection, snippet extraction, and post-composite-score topic boosting (1.4× match / 0.75× demote) for that session only. It is session-isolated, fail-open, and never writes to SQLite or lifecycle columns (v0.9.0)

- Scores document quality using structure, keywords, and metadata richness signals

- Boosts co-accessed documents — notes frequently surfaced together get retrieval reinforcement

- Decomposes complex queries into typed retrieval clauses (BM25/vector/graph) for multi-topic questions

- Cleans stale embeddings automatically before embed runs, removing orphans from deleted/changed documents

- Transaction-safe indexing — crash mid-index leaves zero partial state (atomic commit with rollback)

- Deduplicates hook-generated observations within a 30-minute window using normalized content hashing, preventing memory bloat from repeated hook output

- Navigates temporal neighborhoods around any document via the `timeline` tool: progressive disclosure from search to chronological context to full content

- Boosts frequently-revised memories: documents with higher revision counts get a durability signal in composite scoring (capped at 10%)

- Supports pin/snooze lifecycle for persistent boosts and temporary suppression

- Manages document lifecycle — policy-driven archival sweeps with restore capability

- Auto-routes queries via `memory_retrieve`, which classifies intent and dispatches to the optimal search backend

- Syncs project issues from Beads issue trackers into searchable memory

- Runs a quiet-window heavy maintenance lane: a second consolidation worker, off by default behind `CLAWMEM_HEAVY_LANE=true`, that runs on a longer interval only inside a configurable hour window. Gated by `context_usage` query-rate so it never competes for CPU/GPU with interactive sessions, scoped exclusively via DB-backed `worker_leases`, stale-first by default with an optional surprisal selector, and journals every attempt in `maintenance_runs` for operator visibility (v0.8.0)
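The 30-minute deduplication window listed above can be sketched as follows. Everything here is illustrative: the normalization rule (lowercase, collapsed whitespace), the SHA-256 hash, and the in-memory dictionary are assumptions, since the README only states that normalized content hashing is used.

```python
import hashlib
import re

DEDUP_WINDOW_SECONDS = 30 * 60  # the 30-minute window described above

def content_key(text):
    """Hash of the text after lowercasing and collapsing whitespace (assumed normalization)."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

class ObservationDeduper:
    def __init__(self, window=DEDUP_WINDOW_SECONDS):
        self.window = window
        self.last_seen = {}  # content hash -> timestamp of last accepted observation

    def accept(self, text, now):
        """Return True if the observation should be stored, False if it is a recent duplicate."""
        key = content_key(text)
        previous = self.last_seen.get(key)
        if previous is not None and now - previous < self.window:
            return False
        self.last_seen[key] = now
        return True

deduper = ObservationDeduper()
first = deduper.accept("Decided to use SQLite  WAL mode", now=0)
repeat = deduper.accept("decided to use sqlite wal mode", now=600)    # 10 minutes later
later = deduper.accept("Decided to use SQLite WAL mode", now=1801)    # just past the window
```

Timestamps are passed in explicitly so the behavior is easy to test; a real implementation would more likely persist hashes alongside the observations.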

Runs fully local with no API keys and no cloud services. Integrates via Claude Code hooks and MCP tools, as a native OpenClaw plugin, or as a Hermes Agent MemoryProvider plugin. All modes share the same vault for cross-runtime memory. Works with any MCP-compatible client.

Full version history is in RELEASE_NOTES.md. Upgrade instructions for existing vaults are in docs/guides/upgrading.md.

Architecture

Install

Platform Support

| Platform | Status | Notes |

|---|---|---|

| Linux | Full support | Primary target. systemd services for watcher + embed timer. |

| macOS | Full support | Homebrew SQLite handled automatically. GPU via Metal (llama.cpp). |

| Windows (WSL2) | Full support | Recommended for Windows users. Install Bun + ClawMem inside WSL2. |

| Windows (native) | Not recommended | Bun and sqlite-vec work, but bin/clawmem wrapper is bash, hooks expect bash commands, and systemd services have no equivalent. Use WSL2 instead. |

Prerequisites

Required:

- Bun v1.0+: runtime for ClawMem. On Linux, install via `curl -fsSL https://bun.sh/install | bash` (not snap; snap Bun cannot read stdin, which breaks hooks).

- SQLite with FTS5: included with Bun. On macOS, run `brew install sqlite` for extension loading support (ClawMem detects and uses Homebrew SQLite automatically).

Optional (for better performance):

- llama.cpp (`llama-server`): for dedicated GPU inference. Without it, `node-llama-cpp` runs models in-process (auto-downloads on first use). GPU servers give better throughput and prevent silent CPU fallback.

- systemd (Linux) or launchd (macOS): for persistent background services (watcher, embed timer, GPU servers). ClawMem ships systemd unit templates; macOS users can create equivalent launchd plists. See systemd services.

Optional integrations:

- Claude Code: for hooks + MCP integration

- OpenClaw: for native plugin integration

- Hermes Agent: for `MemoryProvider` plugin integration

- bd CLI v0.58.0+: for Beads issue tracker sync (only if using Beads)

Install from npm (recommended)

npm install -g clawmem

If you use Bun as your package manager:

bun add -g clawmem

Install from source

git clone https://github.com/yoloshii/clawmem.git ~/clawmem

cd ~/clawmem && bun install

ln -sf ~/clawmem/bin/clawmem ~/.bun/bin/clawmem

Setup roadmap

After installing, here's the full journey from zero to working memory:

| Step | What | How | Details |
|---|---|---|---|
| 1. Bootstrap | Create a vault, index your first collection, embed, install hooks and MCP | `clawmem bootstrap ~/notes --name notes` | One command does it all. Or run each step manually (see below). |
| 2. Choose models | Pick embedding + reranker models based on your hardware | 12GB+ VRAM → SOTA stack (zembed-1 + zerank-2). Less → QMD native combo. No GPU → cloud embedding or CPU fallback. | GPU Services |
| 3. Download models | Get the GGUF files for your chosen stack | `wget` from HuggingFace, or let `node-llama-cpp` auto-download the QMD native models on first use | Embedding, LLM Server, Reranker Server |
| 4. Start services | Run GPU servers (if using dedicated GPU) and background services. Optionally enable the v0.8.2 background maintenance workers in the watcher unit so consolidation + deductive synthesis run automatically. | `llama-server` for each model. systemd units for watcher + embed timer. Drop-in for the watcher to enable workers + tune intervals + set the quiet window. | systemd services, background workers |
| 5. Decide what to index | Add collections for your projects, notes, research, and domain docs | `clawmem collection add ~/project --name project` | The more relevant markdown you index, the better retrieval works. See building a rich context field. |
| 6. Connect your agent | Hook into Claude Code, OpenClaw, Hermes, or any MCP client | `clawmem setup hooks && clawmem setup mcp` for Claude Code. `clawmem setup openclaw` for OpenClaw. Copy `src/hermes/` to Hermes plugins for Hermes. | Integration |
| 7. Verify | Confirm everything is working | `clawmem doctor` (full health check) or `clawmem status` (quick index stats) | Verify Installation |

Fastest path: Step 1 alone gets you a working system with in-process CPU/GPU inference and default models — no manual model downloads or service configuration needed. Steps 2-4 are optional upgrades for better performance. Steps 5-6 are where you customize what gets indexed and how your agent connects.

Customize what gets indexed: Each collection has a pattern field in ~/.config/clawmem/config.yaml (default: **/*.md). Tailor it per collection — index project docs, research notes, decision records, Obsidian vaults, or anything else your agents should know about. The more relevant content in the vault, the better retrieval works. See the quickstart for config examples.

Quick start commands

# One command: init + index + embed + hooks + MCP

clawmem bootstrap ~/notes --name notes

# Or step by step:

clawmem init

clawmem collection add ~/notes --name notes

clawmem update --embed

clawmem setup hooks

clawmem setup mcp

# Add more collections (the more you index, the richer retrieval gets)

clawmem collection add ~/projects/myapp --name myapp

clawmem collection add ~/research --name research

clawmem update --embed

# Verify

clawmem doctor

Upgrading

bun update -g clawmem # or: npm update -g clawmem

Database schema migrates automatically on next startup (new tables and columns are added via CREATE TABLE IF NOT EXISTS / ALTER TABLE ADD COLUMN).
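For readers curious what an additive, startup-time migration of this kind looks like, here is a minimal sketch using Python's built-in `sqlite3` module. The table name `documents` and column `revision_count` are hypothetical and are not taken from ClawMem's actual schema.

```python
import sqlite3

def migrate(conn):
    """Idempotent, additive migration: safe to run on every startup."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS documents (id INTEGER PRIMARY KEY, path TEXT NOT NULL)"
    )
    existing = {row[1] for row in conn.execute("PRAGMA table_info(documents)")}
    # SQLite has no ADD COLUMN IF NOT EXISTS, so check before altering
    if "revision_count" not in existing:
        conn.execute("ALTER TABLE documents ADD COLUMN revision_count INTEGER DEFAULT 0")
    conn.commit()

conn = sqlite3.connect(":memory:")
migrate(conn)
migrate(conn)  # second run is a no-op
columns = [row[1] for row in conn.execute("PRAGMA table_info(documents)")]
```

Because the migration only ever adds tables and columns, existing rows are untouched, which is consistent with patch upgrades not needing a reindex.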

After major version updates (e.g. 0.1.x → 0.2.0) that add new enrichment pipelines, run a full enrichment pass to backfill existing documents:

clawmem reindex --enrich # Full enrichment: entity extraction + links + evolution for all docs

clawmem embed # Re-embed if upgrading embedding models (not needed for most updates)

--enrich forces the complete A-MEM pipeline (entity extraction, link generation, memory evolution) on all documents, not just new ones. Without it, reindex only refreshes metadata for existing docs.

Routine patch updates (e.g. 0.2.0 → 0.2.1) do not require reindexing.

For version-specific upgrade notes (opt-in features, optional cleanup steps, verification commands), see docs/guides/upgrading.md.

Integration

Claude Code

ClawMem integrates via hooks (settings.json) and an MCP stdio server. Hooks handle 90% of retrieval automatically - the agent never needs to call tools for routine context.

clawmem setup hooks # Install lifecycle hooks (SessionStart, UserPromptSubmit, Stop, PreCompact)

clawmem setup mcp # Register MCP server in ~/.claude.json (31 tools)

Automatic (90%): context-surfacing injects relevant memory on every prompt. postcompact-inject re-injects state after compaction. decision-extractor, handoff-generator, feedback-loop capture session state on stop.

Agent-initiated (10%): MCP tools (query, intent_search, find_causal_links, timeline, etc.) for targeted retrieval when hooks don't surface what's needed.

OpenClaw

ClawMem registers as a native OpenClaw memory plugin (kind: memory, v0.10.0+). Same 90/10 automatic retrieval, delivered through OpenClaw's plugin-hook bus instead of Claude Code hooks.

# v0.10.4+: profile-aware. Delegates to `openclaw plugins install --force` when the OpenClaw CLI

# is on PATH (auto-enables the plugin, honors OPENCLAW_STATE_DIR, OPENCLAW_CONFIG_PATH, --profile).

# Falls back to a recursive copy honoring OPENCLAW_STATE_DIR when the CLI is absent.

clawmem setup openclaw

# Custom profile (e.g. dev profile at ~/.openclaw-dev):

OPENCLAW_STATE_DIR=~/.openclaw-dev clawmem setup openclaw

What the plugin provides:

- `before_prompt_build` hook (load-bearing) - prompt-aware retrieval (context-surfacing + session-bootstrap) AND the pre-emptive `precompact-extract` run when token usage approaches the compaction threshold. This is the authoritative path for precompact state capture because it runs synchronously before the LLM call that would trigger compaction, so it cannot race the compactor.

- `agent_end` hook - decision extraction, handoff generation, feedback loop (parallel, fire-and-forget at the OpenClaw call site). OpenClaw v2026.4.26+ also enforces a 30s default void-hook timeout on `agent_end`: slow handlers are logged but the underlying postrun work is not cancelled (fail-open).

- `before_compaction` hook (defense-in-depth fallback) - fires `precompact-extract` again for the rare case where `before_prompt_build`'s proximity heuristic missed a sudden token-count jump. Fire-and-forget at OpenClaw's call site, so it races the compactor and offers no correctness guarantee on its own; the `before_prompt_build` path is what actually holds the invariant.

- `session_start` hook - session registration + cached first-turn bootstrap context

- 5 agent tools - `clawmem_search`, `clawmem_get`, `clawmem_session_log`, `clawmem_timeline`, `clawmem_similar`

Disable OpenClaw's native memory search to avoid duplicate injection:

openclaw config set agents.defaults.memorySearch.extraPaths "[]"

ClawMem coexists cleanly with OpenClaw's Active Memory plugin (v2026.4.10+) and, on OpenClaw v2026.4.18+ (#65411), with the memory-core dreaming sidecar — both run alongside ClawMem instead of being mutually exclusive. They search different backends and inject into different prompt regions, so they do not conflict. See the OpenClaw plugin guide — Active Memory coexistence and the memory-core dreaming sidecar section for the two patterns.

Pair ClawMem (memory) with a context-engine plugin (v0.10.0+). OpenClaw and Hermes maintainers have converged on a two-surface plugin model: one slot for memory plugins (cross-session, retrieval-first) and a separate slot for context-engine plugins (in-session, compression/compaction-first). Under that model ClawMem is a memory layer — it has always been one in Hermes via the MemoryProvider ABC, and v0.10.0 moves the OpenClaw integration to the same semantic slot. You can now run ClawMem in the memory slot alongside an LCM-style compression plugin (for example, lossless-claw) in the context-engine slot. The two plugins do not overlap: one persists across sessions, the other reshapes the live window. See the OpenClaw plugin guide — memory vs context engine for the full rationale.

OpenClaw v2026.4.11+ recommended (required for ClawMem v0.10.0+). v2026.4.11 introduced a new plugin discovery contract that requires each plugin directory to ship a `package.json` with `openclaw.extensions` declared, and that rejects symlinked plugin directories. ClawMem v0.10.0 includes both fixes. Older ClawMem versions (< v0.10.0) on OpenClaw v2026.4.11+ silently fail to be discovered: upgrade ClawMem, then re-run `clawmem setup openclaw`. See docs/guides/upgrading.md.

Alternative: OpenClaw agents can also use ClawMem's MCP server directly (clawmem setup mcp), with or without hooks. This gives full access to all 31 MCP tools but bypasses OpenClaw's plugin lifecycle, so you lose token budget awareness, native compaction orchestration, and the agent_end message pipeline. The native OpenClaw plugin is recommended for new setups; MCP is available as an additional or standalone integration.

Hermes Agent

ClawMem integrates as a native MemoryProvider plugin — Hermes's pluggable interface for agent memory. Same automatic retrieval and extraction, delivered through Hermes's memory lifecycle instead of Claude Code hooks.

Install:

# Preferred — user-plugin path (Hermes #10529, v2026.4.13+).

# Survives `git pull` of hermes-agent and avoids dual-registration with bundled providers.

cp -r /path/to/ClawMem/src/hermes ${HERMES_HOME:-~/.hermes}/plugins/clawmem

# Or, the bundled-style path (always supported, takes precedence on name collisions).

# Recommended only when you actively work in the hermes-agent source tree.

cp -r /path/to/ClawMem/src/hermes /path/to/hermes-agent/plugins/memory/clawmem

# Symlink alternative for in-place development (either path).

ln -s /path/to/ClawMem/src/hermes ${HERMES_HOME:-~/.hermes}/plugins/clawmem

Configure in your Hermes profile's .env or environment:

CLAWMEM_BIN=/path/to/clawmem # Path to clawmem binary (or ensure it's on PATH)

CLAWMEM_SERVE_PORT=7438 # REST API port (default: 7438)

CLAWMEM_SERVE_MODE=external # "external" (you run clawmem serve) or "managed" (plugin manages it)

CLAWMEM_PROFILE=balanced # speed | balanced | deep

Then set memory.provider: clawmem in your Hermes config.yaml, or run hermes memory setup to configure interactively.

What the plugin provides:

- `prefetch()`: prompt-aware retrieval via `context-surfacing` hook (automatic every turn)

- `on_session_end()`: decision extraction, handoff generation, feedback loop (parallel)

- `on_pre_compress()`: pre-compaction state preservation

- `session-bootstrap`: session registration + first-turn context injection

- 5 agent tools: `clawmem_retrieve`, `clawmem_get`, `clawmem_session_log`, `clawmem_timeline`, `clawmem_similar`

- Plugin-managed transcript: maintains its own JSONL transcript for ClawMem hooks

Requirements: the clawmem binary on PATH, plus either clawmem serve already running (external mode) or the plugin starting it automatically (managed mode). Python 3.10+. No pip dependencies beyond Hermes itself (uses urllib for REST calls; httpx is optional for better performance).

Alternative: Hermes also has built-in MCP client support. You can add ClawMem as an MCP server in Hermes's config.yaml under mcp_servers for tool-only access. But this misses the lifecycle hooks (prefetch, session_end, pre_compress), so the native plugin is recommended.

See Hermes plugin guide for architecture details, lifecycle mapping, and troubleshooting.

Multi-Framework Operation

All three integrations share the same SQLite vault by default. Claude Code, OpenClaw, and Hermes can run simultaneously — decisions captured in one runtime are immediately available in the others, giving agents persistent shared memory across sessions and platforms. WAL mode + busy_timeout handles concurrent access.
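To make the concurrency note concrete, the sketch below opens one SQLite file from two connections with WAL journaling and a busy timeout, using Python's `sqlite3` module. The 5000 ms timeout, the temporary file path, and the `decisions` table are assumptions for illustration; ClawMem's actual values are not stated here.

```python
import os
import sqlite3
import tempfile

def open_shared_vault(path, busy_timeout_ms=5000):
    """Open a connection configured for concurrent readers and a writer."""
    conn = sqlite3.connect(path)
    # WAL lets readers proceed while another connection is writing
    mode = conn.execute("PRAGMA journal_mode=WAL").fetchone()[0]
    # Wait up to busy_timeout_ms for a lock instead of failing immediately
    conn.execute(f"PRAGMA busy_timeout={int(busy_timeout_ms)}")
    return conn, mode

vault_path = os.path.join(tempfile.mkdtemp(), "index.sqlite")
writer, journal_mode = open_shared_vault(vault_path)
reader, _ = open_shared_vault(vault_path)

writer.execute("CREATE TABLE IF NOT EXISTS decisions (text TEXT)")
writer.execute("INSERT INTO decisions VALUES ('use WAL mode')")
writer.commit()
rows = reader.execute("SELECT text FROM decisions").fetchall()
```

The second connection stands in for a different runtime (say, an OpenClaw agent reading what a Claude Code session just wrote).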

Multi-Vault (Optional)

By default, ClawMem uses a single vault at ~/.cache/clawmem/index.sqlite. For users who want separate memory domains (e.g., work vs personal, or isolated vaults per project), ClawMem supports named vaults.

Configure in ~/.config/clawmem/config.yaml:

vaults:
  work: ~/.cache/clawmem/work.sqlite
  personal: ~/.cache/clawmem/personal.sqlite

Or via environment variable:

export CLAWMEM_VAULTS='{"work":"~/.cache/clawmem/work.sqlite","personal":"~/.cache/clawmem/personal.sqlite"}'
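The environment variable holds a JSON object mapping vault names to paths. A small sketch of how such a value could be parsed is shown here; the function name and the `~` expansion step are assumptions, not a description of ClawMem's loader.

```python
import json
import os

def resolve_vaults(environ):
    """Parse CLAWMEM_VAULTS (JSON object of name -> path) and expand '~' (assumed behavior)."""
    raw = environ.get("CLAWMEM_VAULTS")
    if not raw:
        return {}  # no named vaults configured: only the default vault is used
    vaults = json.loads(raw)
    return {name: os.path.expanduser(path) for name, path in vaults.items()}

env = {"CLAWMEM_VAULTS": '{"work":"~/.cache/clawmem/work.sqlite"}'}
vaults = resolve_vaults(env)
```

Passing the environment in as a dictionary keeps the sketch testable; in practice `os.environ` would be the argument.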

Using vaults with MCP tools:

All retrieval tools (memory_retrieve, query, search, vsearch, intent_search) accept an optional vault parameter. Omit it to use the default vault.

# Search the default vault (no vault param needed)

query("authentication flow")

# Search a named vault

query("project timeline", vault="work")

# List configured vaults

list_vaults()

# Sync content into a vault

vault_sync(vault="work", content_root="~/work/docs")

Single-vault users: No action needed. Everything works without configuration. The vault parameter is always optional and ignored when no vaults are configured.

GPU Services

ClawMem uses three llama-server (llama.cpp) instances for neural inference. All three have in-process fallbacks via node-llama-cpp (auto-downloads on first use), so ClawMem works without a dedicated GPU. node-llama-cpp auto-detects the best available backend — Metal on Apple Silicon, Vulkan where available, CPU as last resort. With GPU acceleration (Metal/Vulkan), in-process inference is fast for these small models (0.3B–1.7B); on CPU-only systems it is significantly slower. For production use, run the servers via systemd services to prevent silent fallback.

GPU with VRAM to spare (12GB+, recommended): ZeroEntropy's distillation-paired stack delivers best retrieval quality — total ~10GB VRAM.

| Service | Port | Model | VRAM | Purpose |

|---|---|---|---|---|

| Embedding | 8088 | zembed-1-Q4_K_M | ~4.4GB | SOTA embedding (2560d, 32K context). Distilled from zerank-2 via zELO. |

| LLM | 8089 | qmd-query-expansion-1.7B-q4_k_m | ~2.2GB | Intent classification, query expansion, A-MEM |

| Reranker | 8090 | zerank-2-Q4_K_M | ~3.3GB | SOTA reranker. Outperforms Cohere rerank-3.5. Optimal pairing with zembed-1. |

Important: zembed-1 and zerank-2 use non-causal attention — -ub must equal -b on llama-server (e.g. -b 2048 -ub 2048). See Reranker Server for details.

License: zembed-1 and zerank-2 are released under CC-BY-NC-4.0 — non-commercial only. The QMD native models below have no such restriction.

No dedicated GPU / GPU without VRAM to spare: The QMD native combo — total ~4GB VRAM, also runs via node-llama-cpp (Metal on Apple Silicon, Vulkan where available, CPU as last resort). Fast with GPU acceleration; significantly slower on CPU-only.

| Service | Port | Model | VRAM | Purpose |

|---|---|---|---|---|

| Embedding | 8088 | EmbeddingGemma-300M-Q8_0 | ~400MB | Vector search, indexing, context-surfacing (768d, 2K context) |

| LLM | 8089 | qmd-query-expansion-1.7B-q4_k_m | ~2.2GB | Intent classification, query expansion, A-MEM |

| Reranker | 8090 | qwen3-reranker-0.6B-Q8_0 | ~1.3GB | Cross-encoder reranking (query, intent_search) |

The bin/clawmem wrapper defaults to localhost:8088/8089/8090. If a server is unreachable (transport error like ECONNREFUSED/ETIMEDOUT), ClawMem sets a 60-second cooldown and falls back to in-process inference via node-llama-cpp (auto-downloads the QMD native models on first use, uses Metal/Vulkan/CPU depending on hardware). HTTP errors (400/500) and user-cancelled requests do not trigger cooldown — the remote server is retried normally on the next call. With GPU acceleration the fallback is fast; on CPU-only it is significantly slower. ClawMem always works either way, but if you're running dedicated GPU servers, use systemd services to ensure they stay up.
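The cooldown rule described above (transport errors start a 60-second cooldown, HTTP errors do not) can be sketched like this. The class and method names are hypothetical, and Python's built-in `ConnectionError` / `TimeoutError` stand in for ECONNREFUSED / ETIMEDOUT.

```python
COOLDOWN_SECONDS = 60  # cooldown length stated above

class RemoteBackend:
    """Tracks whether a remote inference server should be tried or skipped."""

    def __init__(self):
        self.cooldown_until = 0.0

    def should_try_remote(self, now):
        return now >= self.cooldown_until

    def record_failure(self, error, now):
        # Only transport-level failures start a cooldown; HTTP 4xx/5xx do not
        if isinstance(error, (ConnectionError, TimeoutError)):
            self.cooldown_until = now + COOLDOWN_SECONDS

backend = RemoteBackend()
backend.record_failure(ConnectionRefusedError("ECONNREFUSED"), now=100.0)
during = backend.should_try_remote(now=130.0)   # inside the cooldown: use local fallback
after = backend.should_try_remote(now=160.0)    # cooldown elapsed: retry the server

other = RemoteBackend()
other.record_failure(ValueError("HTTP 500"), now=100.0)  # stand-in for an HTTP error
http_error_case = other.should_try_remote(now=101.0)
```

Separating the two error classes avoids abandoning a healthy server just because one request was malformed.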

To prevent fallback and fail fast instead, set CLAWMEM_NO_LOCAL_MODELS=true.

Remote GPU (optional)

If your GPU lives on a separate machine, point the env vars at it:

export CLAWMEM_EMBED_URL=http://gpu-host:8088

export CLAWMEM_LLM_URL=http://gpu-host:8089

export CLAWMEM_LLM_MODEL=qwen3

export CLAWMEM_RERANK_URL=http://gpu-host:8090

For remote setups, set CLAWMEM_NO_LOCAL_MODELS=true to prevent node-llama-cpp from auto-downloading multi-GB model files if a server is unreachable.

No Dedicated GPU (in-process inference)

All three QMD native models run locally without a dedicated GPU. node-llama-cpp auto-downloads them on first use (~300MB embedding + ~1.1GB LLM + ~600MB reranker) and auto-detects the best backend — Metal on Apple Silicon (fast, uses integrated GPU), Vulkan where available (fast, uses discrete or integrated GPU), or CPU as last resort (significantly slower). With Metal or Vulkan, in-process inference handles these small models well; CPU-only is functional but noticeably slower.

Alternatively, use a cloud embedding provider if you prefer not to run models locally.

Embedding

ClawMem calls the OpenAI-compatible /v1/embeddings endpoint for all embedding operations. This works with local llama-server instances and cloud providers alike.

Option A: GPU with VRAM to spare (recommended)

Use zembed-1-Q4_K_M — SOTA retrieval quality, distilled from zerank-2 via ZeroEntropy's zELO methodology. CC-BY-NC-4.0 — non-commercial only.

- Size: 2.4GB, Dimensions: 2560, VRAM: ~4.4GB, Context: 32K tokens

wget https://huggingface.co/Abhiray/zembed-1-Q4_K_M-GGUF/resolve/main/zembed-1-Q4_K_M.gguf

# -ub must match -b for non-causal attention

llama-server -m zembed-1-Q4_K_M.gguf \

--embeddings --port 8088 --host 0.0.0.0 \

-ngl 99 -c 8192 -b 2048 -ub 2048

Option B: No GPU / GPU without VRAM to spare

Use EmbeddingGemma-300M-Q8_0 — the QMD native embedding model. Only 300MB, runs on CPU or any GPU.

- Size: 314MB, Dimensions: 768, VRAM: ~400MB (or CPU), Context: 2048 tokens

wget https://huggingface.co/ggml-org/embeddinggemma-300M-GGUF/resolve/main/embeddinggemma-300M-Q8_0.gguf

# On GPU (add -ngl 99):

llama-server -m embeddinggemma-300M-Q8_0.gguf \

--embeddings --port 8088 --host 0.0.0.0 \

-ngl 99 -c 2048 --batch-size 2048

# On CPU (omit -ngl):

llama-server -m embeddinggemma-300M-Q8_0.gguf \

--embeddings --port 8088 --host 0.0.0.0 \

-c 2048 --batch-size 2048

For multilingual corpora, the SOTA zembed-1 (Option A) supports multilingual out of the box. For a lightweight alternative: granite-embedding-278m-multilingual-Q6_K (314MB, set CLAWMEM_EMBED_MAX_CHARS=1100 due to 512-token context).

Option C: Cloud Embedding API

Alternatively, use a cloud embedding provider instead of running a local server. Any provider with an OpenAI-compatible /v1/embeddings endpoint works.

Configuration: Copy .env.example to .env and set your provider credentials:

cp .env.example .env

# Edit .env:

CLAWMEM_EMBED_URL=https://api.jina.ai

CLAWMEM_EMBED_API_KEY=jina_your-key-here

CLAWMEM_EMBED_MODEL=jina-embeddings-v5-text-small

Or export them in your shell. Precedence: shell environment > .env file > bin/clawmem wrapper defaults.
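The precedence order can be pictured as a layered lookup where the first layer that defines a key wins. The sketch below uses `collections.ChainMap` with plain dictionaries standing in for the three sources; it illustrates the ordering only and is not how the wrapper is implemented.

```python
from collections import ChainMap

def effective_config(shell_env, dotenv, wrapper_defaults):
    """First mapping wins: shell environment, then .env, then wrapper defaults."""
    return ChainMap(shell_env, dotenv, wrapper_defaults)

# Illustrative values for each layer
wrapper_defaults = {"CLAWMEM_EMBED_URL": "http://localhost:8088"}
dotenv = {
    "CLAWMEM_EMBED_URL": "https://api.jina.ai",
    "CLAWMEM_EMBED_MODEL": "jina-embeddings-v5-text-small",
}
shell_env = {"CLAWMEM_EMBED_MODEL": "text-embedding-3-small"}

config = effective_config(shell_env, dotenv, wrapper_defaults)
```

Here the URL comes from `.env` (it overrides the wrapper default) while the model comes from the shell (it overrides `.env`).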

| Provider | CLAWMEM_EMBED_URL | CLAWMEM_EMBED_MODEL | Dimensions | Notes |
|---|---|---|---|---|
| Jina AI | https://api.jina.ai | jina-embeddings-v5-text-small | 1024 | 32K context, task-specific LoRA adapters |
| OpenAI | https://api.openai.com | text-embedding-3-small | 1536 | 8K context, Matryoshka dimensions via CLAWMEM_EMBED_DIMENSIONS |
| Voyage AI | https://api.voyageai.com | voyage-4-large | 1024 | 32K context |
| Cohere | https://api.cohere.com | embed-v4.0 | 1024 | 128K context |

Cloud mode auto-detects your provider from the URL and sends the right parameters (Jina task, Voyage/Cohere input_type, OpenAI dimensions). Batch embedding (50 fragments/request), server-side truncation, adaptive TPM-aware pacing, and retry with jitter are all handled automatically. Set CLAWMEM_EMBED_TPM_LIMIT to match your provider tier (default: 100000). See docs/guides/cloud-embedding.md for full details.

Note: Cloud providers handle their own context window limits — ClawMem skips client-side truncation when an API key is set. Local llama-server truncates at CLAWMEM_EMBED_MAX_CHARS (default: 6000 chars).

Verify and embed

# Verify endpoint is reachable

curl $CLAWMEM_EMBED_URL/v1/embeddings \

-H "Content-Type: application/json" \

-H "Authorization: Bearer $CLAWMEM_EMBED_API_KEY" \

-d "{\"input\":\"test\",\"model\":\"$CLAWMEM_EMBED_MODEL\"}"

# Embed your vault

./bin/clawmem embed

LLM Server

Intent classification, query expansion, and A-MEM extraction use qmd-query-expansion-1.7B — a Qwen3-1.7B finetuned by QMD specifically for generating search expansion terms (hyde, lexical, and vector variants). ~1.1GB at q4_k_m quantization, served via llama-server on port 8089.

Without a server: If CLAWMEM_LLM_URL is unset, node-llama-cpp auto-downloads the model on first use.

Performance (RTX 3090):

- Intent classification: 27ms

- Query expansion: 333 tok/s

- VRAM: ~2.2-2.8GB depending on quantization

Qwen3 /no_think flag: Qwen3 uses thinking tokens by default. ClawMem appends /no_think to all prompts automatically to get structured output in the content field.

Intent classification: Uses a dual-path approach:

- Heuristic regex classifier (instant) — handles strong signals (why/when/who keywords) with 0.8+ confidence

- LLM refinement (27ms on GPU) — only for ambiguous queries below 0.8 confidence
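The dual-path approach can be sketched as a cheap regex pass that only defers to the LLM when its confidence falls below 0.8. The keyword patterns, the 0.9 / 0.5 heuristic confidences, and the `fake_llm` stand-in are all assumptions; the real heuristics are not shown in this README.

```python
import re

CONFIDENCE_THRESHOLD = 0.8  # threshold stated above

# Hypothetical keyword patterns for three of the four intents
PATTERNS = [
    ("WHY", re.compile(r"\b(why|because|reason)\b", re.I)),
    ("WHEN", re.compile(r"\b(when|before|after|since)\b", re.I)),
    ("ENTITY", re.compile(r"\b(who|whose)\b", re.I)),
]

def heuristic_intent(query):
    matches = [intent for intent, pattern in PATTERNS if pattern.search(query)]
    if len(matches) == 1:
        return matches[0], 0.9   # one unambiguous keyword signal
    return "WHAT", 0.5           # no signal or conflicting signals

def classify_intent(query, llm_classify):
    """Return (intent, confidence, path); the LLM is only called for ambiguous queries."""
    intent, confidence = heuristic_intent(query)
    if confidence >= CONFIDENCE_THRESHOLD:
        return intent, confidence, "heuristic"
    intent, confidence = llm_classify(query)
    return intent, confidence, "llm"

def fake_llm(query):
    # Stand-in for a request to the LLM server
    return "WHAT", 0.7

strong = classify_intent("Why did we switch to SQLite?", fake_llm)
ambiguous = classify_intent("authentication flow", fake_llm)
```

Most queries with an obvious keyword never reach the model, which keeps the common case effectively free.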

Server setup:

# Download the finetuned model

wget https://huggingface.co/tobil/qmd-query-expansion-1.7B-gguf/resolve/main/qmd-query-expansion-1.7B-q4_k_m.gguf

# Start llama-server for LLM inference

llama-server -m qmd-query-expansion-1.7B-q4_k_m.gguf \

--port 8089 --host 0.0.0.0 \

-ngl 99 -c 4096 --batch-size 512

Reranker Server

Cross-encoder reranking for query and intent_search pipelines on port 8090. ClawMem calls the /v1/rerank endpoint (or falls back to scoring via /v1/completions for compatible servers).

Scores each candidate against the original query (cross-encoder architecture). query pipeline: 4000 char context per doc (deep reranking); intent_search: 200 char context per doc (fast reranking).

GPU with VRAM to spare (recommended): zerank-2-Q4_K_M (2.4GB, ~3.3GB VRAM). Outperforms Cohere rerank-3.5 and Gemini 2.5 Flash. Optimal pairing with zembed-1 (same distillation architecture via zELO). CC-BY-NC-4.0 — non-commercial only.

wget https://huggingface.co/keisuke-miyako/zerank-2-gguf-q4_k_m/resolve/main/zerank-2-Q4_k_m.gguf

# -ub must match -b for non-causal attention

llama-server -m zerank-2-Q4_k_m.gguf \

--reranking --port 8090 --host 0.0.0.0 \

-ngl 99 -c 2048 -b 2048 -ub 2048

CPU / GPU without VRAM to spare: qwen3-reranker-0.6B-Q8_0 (~600MB, ~1.3GB VRAM). The QMD native reranker — auto-downloaded by node-llama-cpp if no server is running.

wget https://huggingface.co/ggml-org/Qwen3-Reranker-0.6B-Q8_0-GGUF/resolve/main/Qwen3-Reranker-0.6B-Q8_0.gguf

llama-server -m Qwen3-Reranker-0.6B-Q8_0.gguf \
  --reranking --port 8090 --host 0.0.0.0 \
  -ngl 99 -c 2048 --batch-size 512

Note: zerank-2 and zembed-1 use non-causal attention — -ub (ubatch) must equal -b (batch). Omitting -ub or setting it lower causes assertion crashes. qwen3-reranker-0.6B does not have this requirement. See llama.cpp#12836.

MCP Server

ClawMem exposes 31 MCP tools via the Model Context Protocol and an optional HTTP REST API. Any MCP-compatible client or HTTP client can use it.

Claude Code (automatic):

./bin/clawmem setup mcp # Registers in ~/.claude.json

Manual (any MCP client):

Add to your MCP config (e.g. ~/.claude.json, claude_desktop_config.json, or your client's equivalent):

{
  "mcpServers": {
    "clawmem": {
      "command": "/absolute/path/to/clawmem/bin/clawmem",
      "args": ["mcp"]
    }
  }
}

The server runs via stdio — no network port needed. The bin/clawmem wrapper sets the GPU endpoint env vars automatically.
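If you script the manual registration, a sketch like the one below merges the entry into an existing config without clobbering other servers. The helper names are hypothetical and this is not what `clawmem setup mcp` does internally; it only reproduces the JSON shape shown above.

```python
import json
from pathlib import Path
from typing import Dict


def add_clawmem_server(config: Dict, clawmem_bin: str) -> Dict:
    """Return a copy of an MCP config with the clawmem stdio server registered."""
    merged = dict(config)
    servers = dict(merged.get("mcpServers", {}))  # copy so the input stays untouched
    servers["clawmem"] = {"command": clawmem_bin, "args": ["mcp"]}
    merged["mcpServers"] = servers
    return merged


def register(config_path: Path, clawmem_bin: str) -> None:
    """Read the config file (if present), merge the entry, and write it back."""
    existing = json.loads(config_path.read_text()) if config_path.exists() else {}
    updated = add_clawmem_server(existing, clawmem_bin)
    config_path.write_text(json.dumps(updated, indent=2))
```

For example, `register(Path.home() / ".claude.json", "/absolute/path/to/clawmem/bin/clawmem")` would update the Claude Code config; back the file up first, since it is rewritten in place.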

Verify: After registering, your client should see tools including memory_retrieve, search, vsearch, query, query_plan, intent_search, timeline, etc.

HTTP REST API (optional)

For web dashboards, non-MCP agents, cross-machine access, or programmatic use:

./bin/clawmem serve # localhost:7438, no auth

./bin/clawmem serve --port 8080 # custom port

CLAWMEM_API_TOKEN=secret ./bin/clawmem serve # with bearer token auth

Endpoints:

| Method | Path | Description |
|---|---|---|
| GET | /health | Liveness probe + version + doc count |
| GET | /stats | Full index statistics |
| POST | /search | Unified search (mode: auto/keyword/semantic/hybrid) |
| POST | /retrieve | Smart retrieve with auto-routing (mode: auto/keyword/semantic/causal/timeline/hybrid) |
| GET | /documents/:docid | Single document by 6-char hash prefix |
| GET | /documents?pattern=... | Multi-get by glob pattern |
| GET | /timeline/:docid | Temporal neighborhood (before/after) |
| GET | /sessions | Recent session history |
| GET | /collections | List all collections |
| GET | /lifecycle/status | Active/archived/pinned/snoozed counts |
| POST | /documents/:docid/pin | Pin/unpin |
| POST | /documents/:docid/snooze | Snooze until date |
| POST | /documents/:docid/forget | Deactivate |
| POST | /lifecycle/sweep | Archive stale docs (dry_run default) |
| GET | /graph/causal/:docid | Causal chain traversal |
| GET | /graph/similar/:docid | k-NN neighbors |
| GET | /export | Full vault export as JSON |
| POST | /reindex | Trigger re-scan |
| POST | /graphs/build | Rebuild temporal + semantic graphs |

Auth: Set CLAWMEM_API_TOKEN env var to require Authorization: Bearer <token> on all requests. If unset, access is open (localhost-only by default). See .env.example.

Search example:

curl -X POST http://localhost:7438/search \
  -H 'Content-Type: application/json' \
  -d '{"query": "authentication decisions", "mode": "hybrid", "compact": true}'
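The same request from Python, as a minimal sketch using only the standard library. The function names are hypothetical, and the response is returned as parsed JSON without assuming its fields, since this README does not document the response schema. The bearer header is added only when a token is supplied, matching the auth behavior described above.

```python
import json
import os
import urllib.request
from typing import Any, Optional


def build_search_request(query: str, mode: str = "hybrid", compact: bool = True,
                         base_url: str = "http://localhost:7438",
                         token: Optional[str] = None) -> urllib.request.Request:
    """Build the POST /search request; add a bearer header only if a token is set."""
    headers = {"Content-Type": "application/json"}
    if token:
        headers["Authorization"] = f"Bearer {token}"
    body = json.dumps({"query": query, "mode": mode, "compact": compact}).encode("utf-8")
    return urllib.request.Request(f"{base_url}/search", data=body,
                                  headers=headers, method="POST")


def search(query: str, **kwargs: Any) -> Any:
    """Send the request, reading CLAWMEM_API_TOKEN from the environment by default."""
    kwargs.setdefault("token", os.environ.get("CLAWMEM_API_TOKEN"))
    with urllib.request.urlopen(build_search_request(query, **kwargs), timeout=30) as resp:
        return json.load(resp)
```

Calling `search("authentication decisions")` against a running `clawmem serve` instance is equivalent to the curl command above.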

Verify Installation

./bin/clawmem doctor # Full health check

./bin/clawmem status # Quick index status

bun test # Run test suite

Agent Instructions

ClawMem ships three instruction files and an optional maintenance agent:

| File | Loaded | Purpose |
|---|---|---|
| CLAUDE.md | Automatically (Claude Code, when working in this repo) | Complete operational reference: hooks, tools, query optimization, scoring, pipeline details, troubleshooting |
| AGENTS.md | Framework-dependent | Identical to CLAUDE.md, for cross-framework compatibility (Cursor, Windsurf, Codex, etc.) |
| SKILL.md | On-demand (agent reads when needed) | Same reference as CLAUDE.md, shipped with the package for cross-project use |
| agents/clawmem-curator.md | On-demand via clawmem setup curator | Maintenance agent: lifecycle triage, retrieval health checks, dedup sweeps, graph rebuilds |

Working in the ClawMem repo: No action needed — CLAUDE.md loads automatically.

Using ClawMem from other projects: Your agent needs instructions on how to use ClawMem's hooks and MCP tools. Two options:

Option A: Copy instructions into your project

Copy the contents of CLAUDE.md (or the relevant sections) into your project's own CLAUDE.md or AGENTS.md. Simple but requires manual updates when ClawMem changes.

Option B: Add a trigger block (recommended)

Add this minimal trigger block to your global ~/.claude/CLAUDE.md. It keeps the routing rules loaded in every session and tells the agent how to find the full reference (SKILL.md) shipped with your installation when it needs deeper guidance:

## ClawMem

Architecture: hooks (automatic, ~90%) + MCP tools (explicit, ~10%).

Vault: `~/.cache/clawmem/index.sqlite` | Config: `~/.config/clawmem/config.yaml`

### Escalation Gate (3 rules — ONLY escalate to MCP tools when one fires)

1. **Low-specificity injection** — `<vault-context>` is empty or lacks the specific fact needed

2. **Cross-session question** — "why did we decide X", "what changed since last time"

3. **Pre-irreversible check** — before destructive or hard-to-reverse changes

### Tool Routing (once escalated)

**Preferred:** `memory_retrieve(query)` — auto-classifies and routes to the optimal backend.

**Direct routing** (when calling specific tools):

"why did we decide X" → intent_search(query) NOT query()

"what happened last session" → session_log() NOT query()

"what else relates to X" → find_similar(file) NOT query()

Complex multi-topic → query_plan(query) NOT query()

General recall → query(query, compact=true)

Keyword spot check → search(query, compact=true)

Conceptual/fuzzy → vsearch(query, compact=true)

Full content → multi_get("path1,path2")

Lifecycle health → lifecycle_status()

Stale sweep → lifecycle_sweep(dry_run=true)

Restore archived → lifecycle_restore(query)

ALWAYS `compact=true` first → review → `multi_get` for full content.

### Proactive Use (no escalation gate needed)

- User says "remember this" / critical decision made → `memory_pin(query)` immediately

- User corrects a misconception → `memory_pin(query)` the correction

- `<vault-context>` surfaces irrelevant/noisy content → `memory_snooze(query, until)` for 30 days

- Need to correct a memory → `memory_forget(query)`

- After bulk ingestion → `build_graphs`

### Anti-Patterns

- Do NOT use `query()` for everything — match query type to tool, or use `memory_retrieve`

- Do NOT call query/intent_search every turn — 3 rules above are the only gates

- Do NOT re-search what's already in `<vault-context>`

- Do NOT pin everything — pin is for persistent high-priority items, not routine decisions

- Do NOT forget memories to "clean up" — let confidence decay handle it

- Do NOT wait for curator to pin decisions — pin immediately when critical

For detailed operational guidance (query optimization, troubleshooting, collection setup, embedding workflow, graph building, curator), find and read the shipped SKILL.md:

Bash: CLAWMEM_ROOT=$(cd "$(dirname "$(which clawmem)")/.." && pwd) && echo "$CLAWMEM_ROOT/SKILL.md"

Then: Read the file at that path.

This gives your agent the 3-rule gate, tool routing, and proactive behaviors always loaded. When it needs deeper guidance, it locates and reads the full SKILL.md reference shipped with your installation — no symlinks or skill registration required.

CLI Reference

| Command | Description |
|---|---|
| `clawmem init` | Create DB + config |
| `clawmem bootstrap <vault> [--name N] [--skip-embed]` | One-command setup |
| `clawmem collection add <path> --name <name>` | Add a collection |
| `clawmem collection list` | List collections |
| `clawmem collection remove <name>` | Remove a collection |
| `clawmem update [--pull] [--embed]` | Incremental re-scan |
| `clawmem mine <dir> [-c name] [--embed] [--synthesize]` | Import conversation exports (--synthesize runs post-import LLM fact extraction, v0.7.2) |
| `clawmem embed [-f]` | Generate fragment embeddings |
| `clawmem reindex [--force]` | Full re-index |
| `clawmem watch` | File watcher daemon |
| `clawmem search <query> [-n N] [--json]` | BM25 keyword search |
| `clawmem vsearch <query> [-n N] [--json]` | Vector semantic search |
| `clawmem query <query> [-n N] [--json]` | Full hybrid pipeline |
| `clawmem profile` | Show user profile |
| `clawmem profile rebuild` | Force profile rebuild |
| `clawmem update-context` | Regenerate per-folder CLAUDE.md |
| `clawmem list [-n/--limit N] [-c col] [--json]` | Browse recent documents |
| `clawmem budget [--session ID]` | Token utilization |
| `clawmem log [--last N]` | Session history |
| `clawmem hook <name>` | Manual hook trigger |
| `clawmem surface --context --stdin` | IO6: pre-prompt context injection |
| `clawmem surface --bootstrap --stdin` | IO6: per-session bootstrap injection |
| `clawmem reflect [N]` | Cross-session reflection (last N days, default 14) |
| `clawmem consolidate [--dry-run] [N]` | Find and archive duplicate low-confidence docs |
| `clawmem install-service [--enable] [--remove]` | Systemd watcher service |
| `clawmem setup hooks [--remove]` | Install/remove Claude Code hooks |
| `clawmem setup mcp [--remove]` | Register/remove MCP server |
| `clawmem setup curator [--remove]` | Install/remove curator maintenance agent |
| `clawmem mcp` | Start stdio MCP server |
| `clawmem serve [--port 7438] [--host 127.0.0.1]` | Start HTTP REST API server |
| `clawmem path` | Print database path |
| `clawmem doctor` | Full health check |
| `clawmem status` | Quick index status |

MCP Tools (31)

Registered by clawmem setup mcp. Available to any MCP-compatible client.

| Tool | Description |
|---|---|
| __IMPORTANT | Workflow guide: prefer memory_retrieve → match query type to tool → multi_get for full content |

Core Search & Retrieval

| Tool | Description |
|---|---|
| memory_retrieve | Preferred entry point. Auto-classifies query and routes to optimal backend (query, intent_search, session_log, find_similar, or query_plan). Use instead of manually choosing a search tool. |
| search | BM25 keyword search for exact terms, config names, error codes, filenames. Composite scoring + co-activation boost + compact mode. Collection filter supports comma-separated values. Prefer memory_retrieve for auto-routing. |
| vsearch | Vector semantic search for conceptual/fuzzy matching when exact keywords are unknown. Composite scoring + co-activation boost + compact mode. Collection filter supports comma-separated values. Prefer memory_retrieve for auto-routing. |
| query | Full hybrid pipeline (BM25 + vector + rerank), general-purpose when query type is unclear. WRONG for "why" questions (use intent_search) or cross-session queries (use session_log). Prefer memory_retrieve for auto-routing. Intent hint, strong-signal bypass, chunk dedup, candidateLimit, MMR diversity, compact mode. |
| get | Retrieve single document by path or docid |
| multi_get | Retrieve multiple docs by glob or comma-separated list |
| find_similar | USE THIS for "what else relates to X", "show me similar docs". Finds k-NN vector neighbors and discovers connections beyond keyword overlap that search/query cannot find. |

Intent-Aware Search

| Tool | Description |
|---|---|
| intent_search | USE THIS for "why did we decide X", "what caused Y", "who worked on Z". Classifies intent (WHY/WHEN/ENTITY/WHAT), traverses causal + semantic graph edges. Returns decision chains that query() cannot find. |
| query_plan | USE THIS for complex multi-topic queries ("tell me about X and also Y", "compare A with B"). Decomposes into parallel typed clauses (bm25/vector/graph), executes each, merges via RRF. query() searches as one blob; this tool splits topics and routes each optimally. |

intent_search pipeline: Query → Intent Classification → BM25 + Vector → Intent-Weighted RRF → Graph Expansion (WHY/ENTITY intents) → Cross-Encoder Reranking → Composite Scoring

query_plan pipeline: Query → LLM decomposition into 2-4 typed clauses → Parallel execution (BM25/vector/graph per clause) → RRF merge across clauses → Composite scoring. Falls back to single-query for simple inputs.
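Both pipelines merge ranked lists with reciprocal rank fusion (RRF). The sketch below shows the standard formula, score(d) = Σ w / (k + rank), with optional per-source weights to illustrate the intent-weighted variant. The constant k = 60 is the conventional default from the RRF literature, and the weight values are hypothetical; this README does not state what ClawMem uses for either.

```python
from collections import defaultdict
from typing import Dict, List, Optional


def rrf_merge(rankings: Dict[str, List[str]],
              weights: Optional[Dict[str, float]] = None,
              k: int = 60) -> List[str]:
    """Fuse ranked doc-id lists: each source adds weight / (k + rank) per document."""
    weights = weights or {}
    scores: Dict[str, float] = defaultdict(float)
    for source, ranked_ids in rankings.items():
        weight = weights.get(source, 1.0)  # unweighted sources count once
        for rank, doc_id in enumerate(ranked_ids, start=1):
            scores[doc_id] += weight / (k + rank)
    # Highest fused score first; doc id breaks ties deterministically.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


rankings = {"bm25": ["a", "b", "c"], "vector": ["b", "c", "a"]}
print(rrf_merge(rankings))                           # equal weights
print(rrf_merge(rankings, weights={"bm25": 3.0}))    # favor keyword matches
```

Because RRF uses only ranks, it can combine BM25 scores and vector similarities without normalizing their very different score scales. Weighting a source shifts the merge toward the retriever that suits the classified intent.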

Multi-Graph & Causal

| Tool | Description |
|---|---|
| build_graphs | Build temporal and/or semantic graphs from document corpus |
| find_causal_links | Trace decision chains: "what led to X", "how we got from A to B". Follow up intent_search with this tool on a top result to walk the full causal chain. Traverses causes / caused_by / both up to N hops with depth-annotated reasoning. |
| kg_query | Query the SPO knowledge graph: "what does X relate to?", "what was true about X when?". Returns temporal entity-relationship triples with validity windows. Accepts entity name (resolved via searchEntities) or canonical ID in vault:type:slug form. Triples are populated by the decision-extractor hook from observer-emitted <triples> blocks. |
| memory_evolution_status | Show how a document's A-MEM metadata evolved over time |
| timeline | Show the temporal neighborhood around a document: what was created/modified before and after it. Progressive disclosure: search → timeline (context) → get (full content). Supports same-collection scoping and session correlation. |
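The hop-limited, depth-annotated traversal that find_causal_links describes can be sketched as a breadth-first walk. This is illustrative only: the adjacency-map representation and function name are hypothetical, and the caller is assumed to pass the edge map for the direction it wants (causes, caused_by, or a union of both).

```python
from collections import deque
from typing import Dict, List, Tuple


def walk_causal_chain(edges: Dict[str, List[str]], start: str,
                      max_hops: int = 3) -> List[Tuple[str, int]]:
    """Breadth-first walk from start; returns (doc_id, depth) pairs up to max_hops."""
    seen = {start}
    queue = deque([(start, 0)])
    chain: List[Tuple[str, int]] = []
    while queue:
        node, depth = queue.popleft()
        if depth == max_hops:
            continue  # hop budget exhausted on this branch
        for neighbor in edges.get(node, []):
            if neighbor not in seen:  # guard against cycles
                seen.add(neighbor)
                chain.append((neighbor, depth + 1))
                queue.append((neighbor, depth + 1))
    return chain


# Hypothetical "caused_by" edges between document ids.
caused_by = {"d1": ["d2"], "d2": ["d3", "d1"], "d3": ["d4"]}
print(walk_causal_chain(caused_by, "d1", max_hops=2))
```

Breadth-first order means each document is reported at its shortest distance from the starting decision, which is what makes the depth annotation meaningful.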

Beads Integration

| Tool | Description |
|---|---|
| beads_sync | Sync Beads issues from Dolt backend (bd CLI) into memory: creates docs, bridges all dep types to memory_relations, runs A-MEM enrichment |

Vault Management

| Tool | Description |
|---|---|
| list_vaults | Show configured vault names and paths. Empty in single-vault mode. |
| vault_sync | Index markdown from a directory into a named vault. Restricted-path validation rejects sensitive directories. |

Agent Diary

| Tool | Description |
|---|---|
| diary_write | Write a diary entry. Use for recording important events, decisions, or observations in environments without hook support. Stored as searchable memories. |
| diary_read | Read recent diary entries. Filter by agent name. |

Memory Management & Lifecycle

| Tool | Description |
|---|---|
| memory_forget | Search → deactivate closest match (with audit trail) |
| memory_pin | Pin a memory for +0.3 composite boost. USE PROACTIVELY when: user states a persistent constraint, makes an architecture decision, or corrects a misconception. Don't wait for curator; pin critical decisions immediately. |
| memory_snooze | Temporarily hide a memory from context surfacing until a date. USE PROACTIVELY when <vault-context> repeatedly surfaces irrelevant content; snooze for 30 days instead of ignoring it. |
| status | Index health with content type distribution |
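How pinning and snoozing could interact with ranking, as a minimal sketch. Only the +0.3 pin boost and the "hidden until a date" behavior come from the table above. The `Memory` type, the additive application of the boost, and treating the snooze date as the first day the memory is visible again are assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional

PIN_BOOST = 0.3  # composite boost quoted in the memory_pin description


@dataclass
class Memory:
    doc_id: str
    score: float                          # composite score before lifecycle adjustments
    pinned: bool = False
    snoozed_until: Optional[date] = None  # hidden while today is before this date


def surface(memories: List[Memory], today: date) -> List[str]:
    """Drop snoozed memories, apply the pin boost, return doc ids best first."""
    visible = [m for m in memories
               if m.snoozed_until is None or m.snoozed_until <= today]
    ranked = sorted(visible,
                    key=lambda m: m.score + (PIN_BOOST if m.pinned else 0.0),
                    reverse=True)
    return [m.doc_id for m in ranked]


memories = [
    Memory("a", 0.6),
    Memory("b", 0.4, pinned=True),
    Memory("c", 0.9, snoozed_until=date(2030, 1, 1)),
]
print(surface(memories, date(2025, 1, 1)))
```

In this sketch a pinned memory can outrank a stronger unpinned one, while a snoozed memory is excluded entirely until its date passes, regardless of score.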
