junhoyeo/tokscale

🛰️ A CLI tool for tracking token usage from OpenCode, Claude Code, 🦞OpenClaw (Clawdbot/Moltbot), Pi, Codex, Gemini, Cursor, AmpCode, Factory Droid, Kimi, and more! • 🏅Global Leaderboard + 2D/3D Contributions Graph

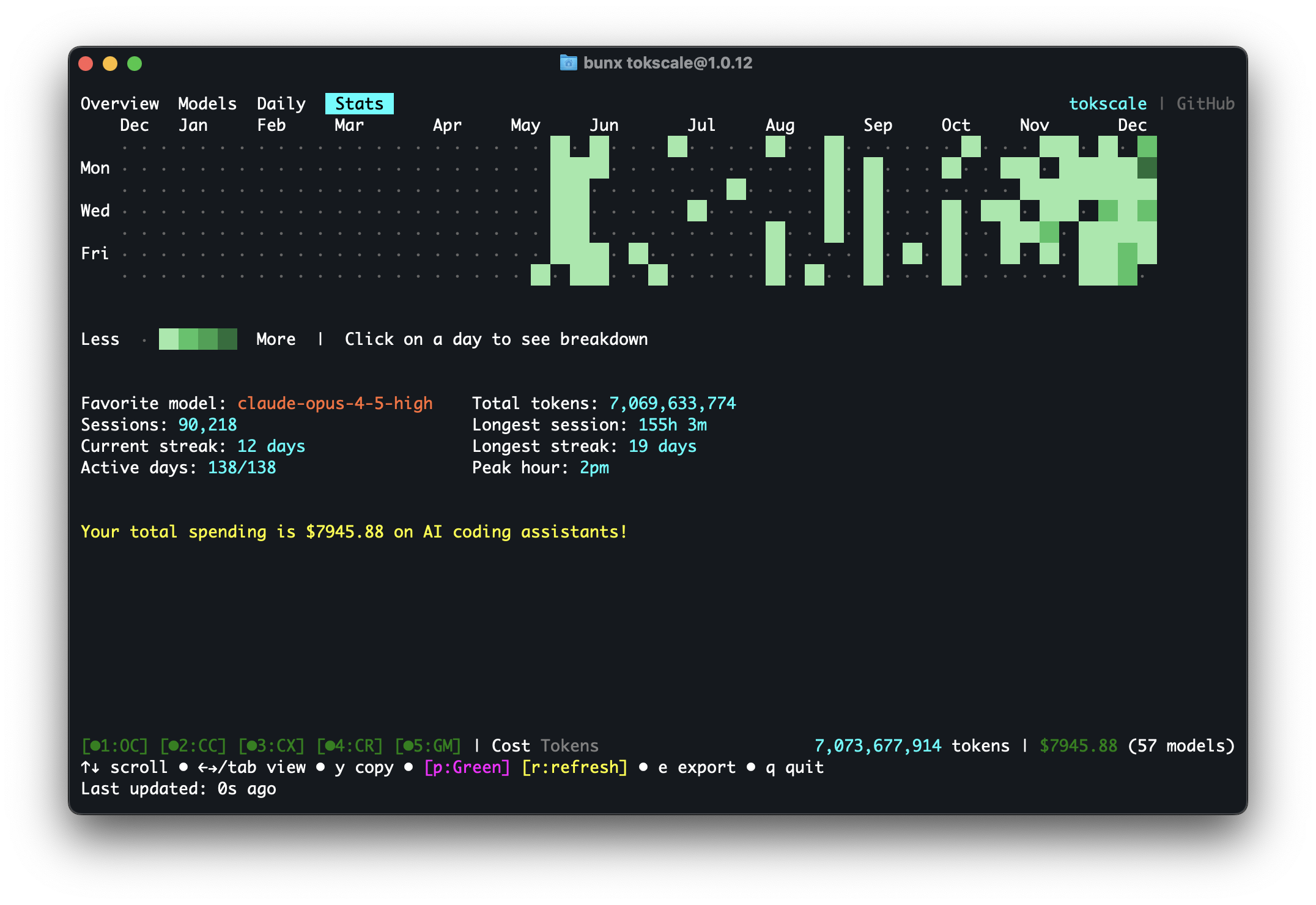

Tokscale is a high-performance CLI tool and visualization dashboard designed to track token consumption and costs across various AI coding agents. It functions by scanning local databases, session logs, and configuration directories to aggregate usage data from multiple providers into a unified interface. The tool features a native Rust TUI for real-time monitoring and supports a web-based frontend with 3D contribution graphs. It enables developers to audit their spending and usage patterns across a wide range of platforms including Hermes Agent, Claude Code, and GitHub Copilot.

- Tracks token usage and costs across multiple AI coding agents

- Features a native Rust TUI and 3D visualization dashboard

- Supports integrations for Hermes, Claude, Gemini, and Cursor


A high-performance CLI tool and visualization dashboard for tracking token usage and costs across multiple AI coding agents.

[!TIP]

v2 is here — native Rust TUI, cross-platform support, and more.

I drop new open-source work every week. Don't miss the next one.

Follow @junhoyeo on GitHub for more projects. Hacking on AI, infra, and everything in between. Come hang out in our Discord — and surround yourself with the world's top-tier vibers.


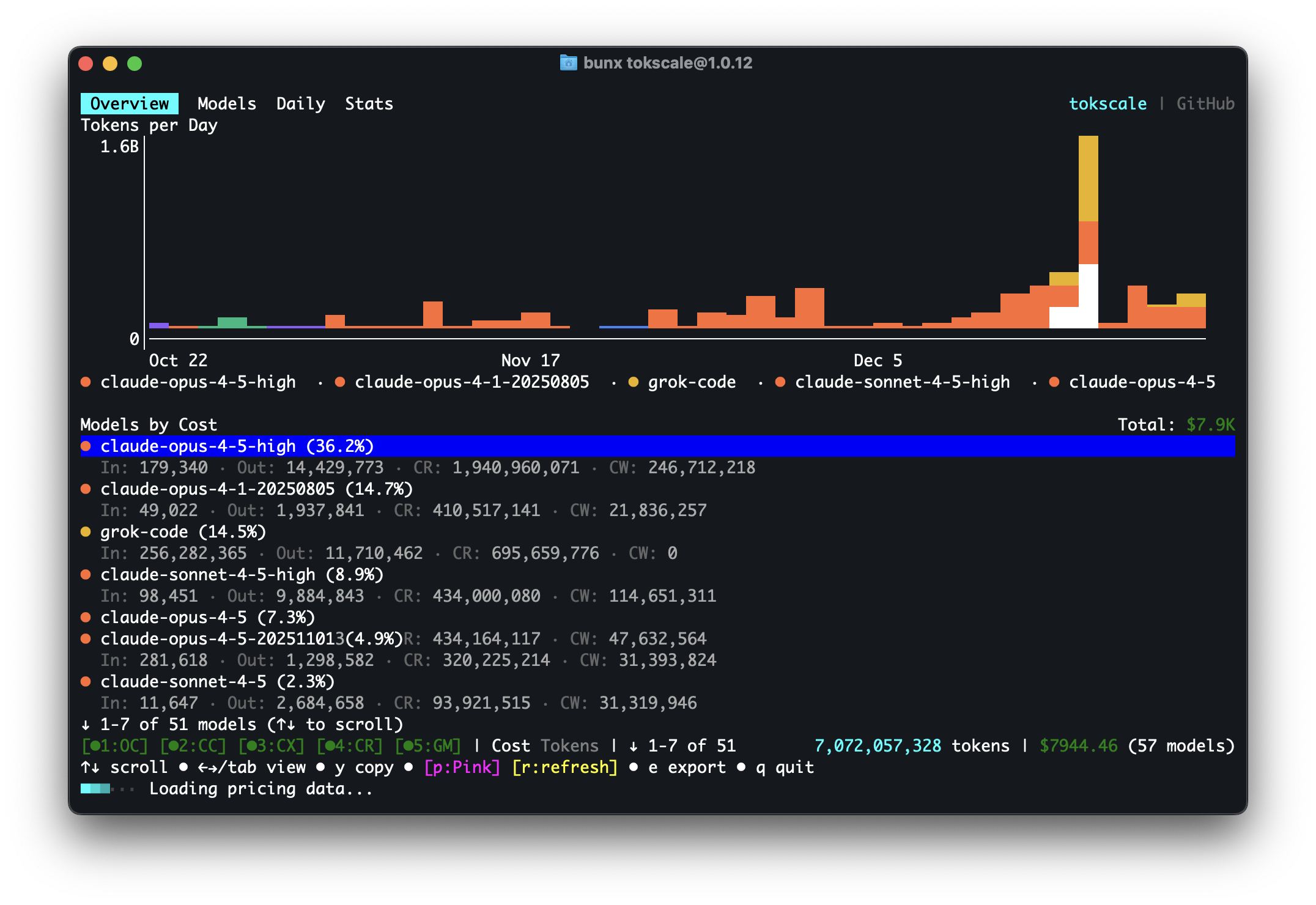

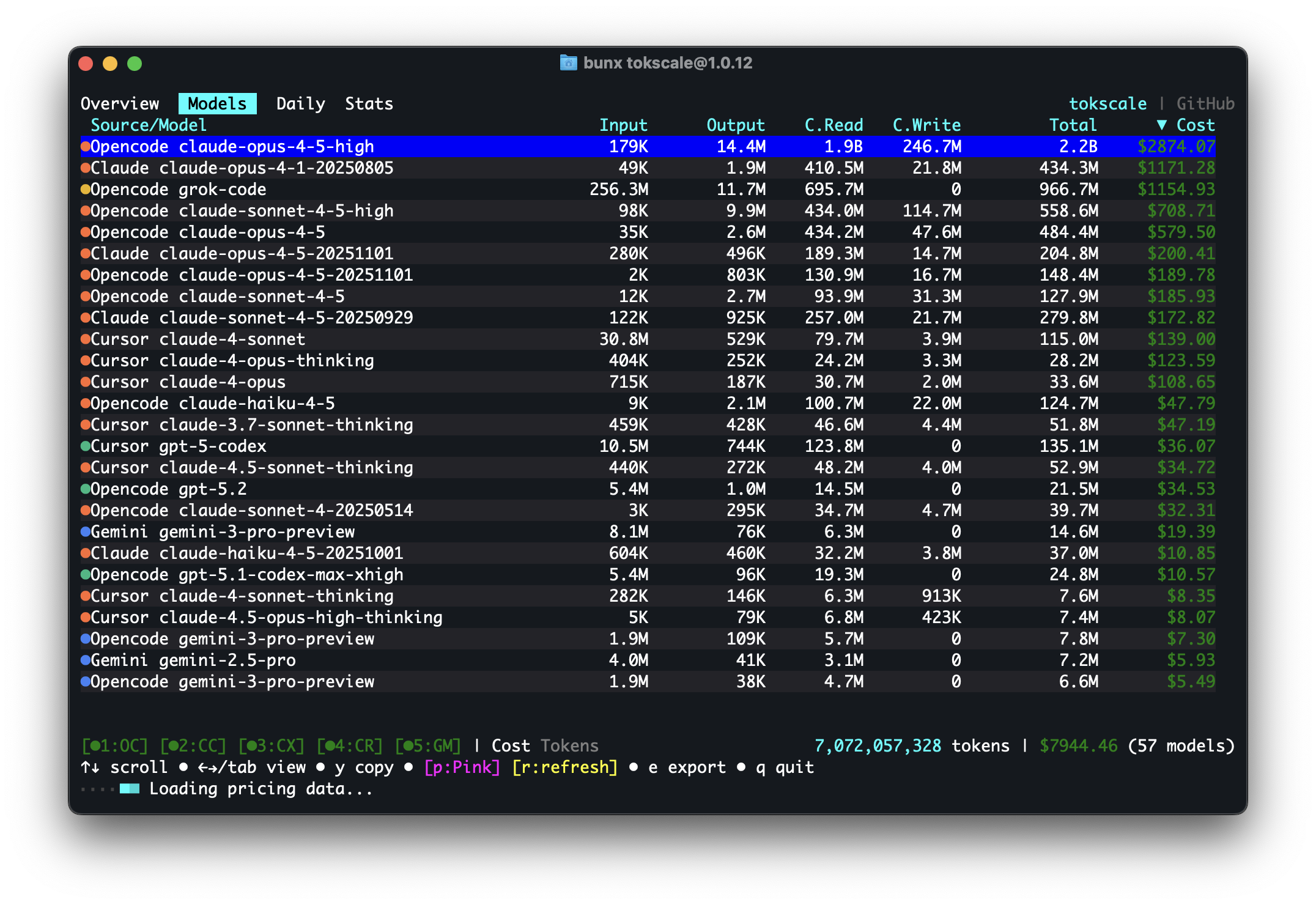

[Screenshots: Overview, Models]

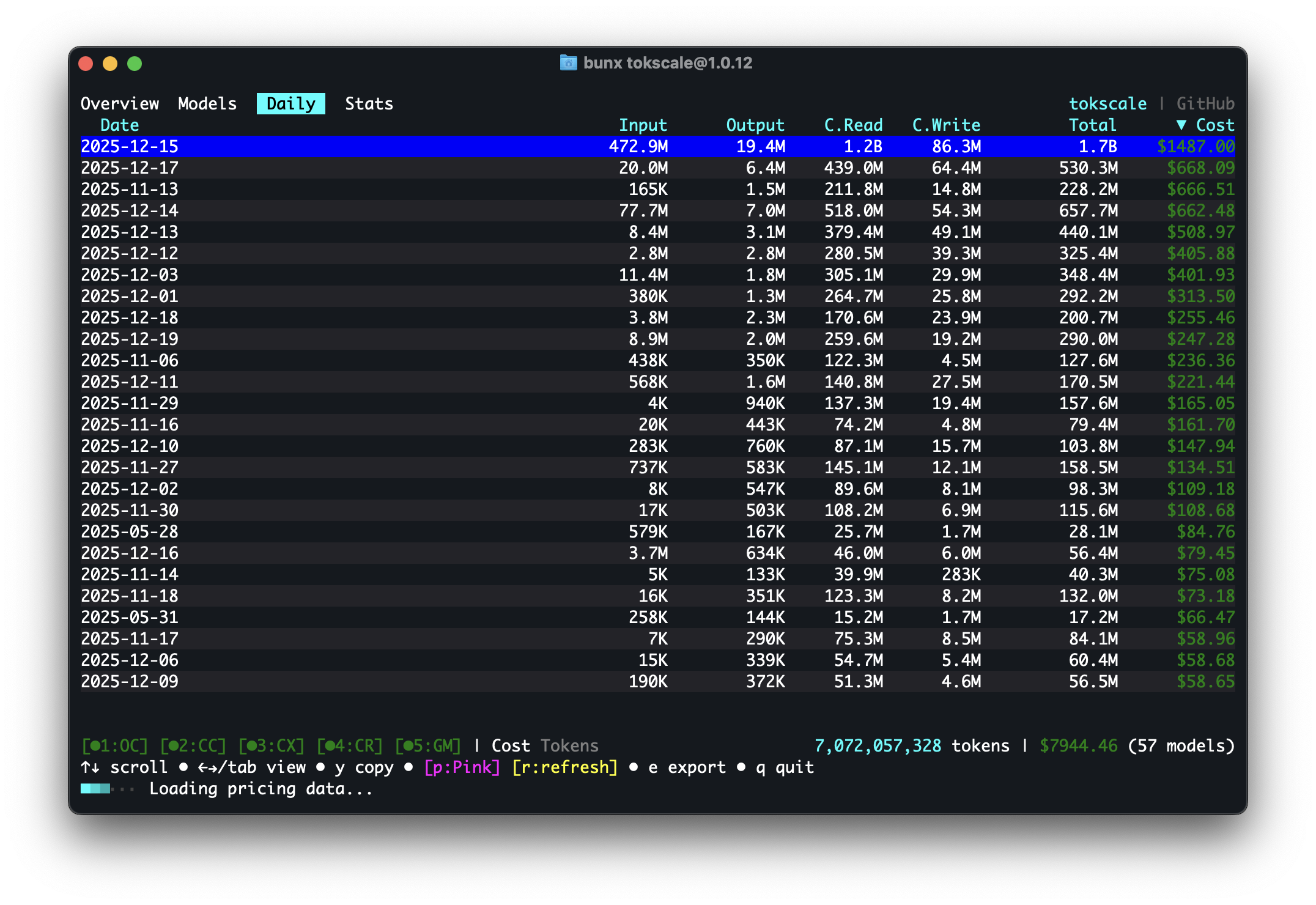

[Screenshots: Daily Summary, Stats, Frontend (3D Contributions Graph), Wrapped 2025]

Run

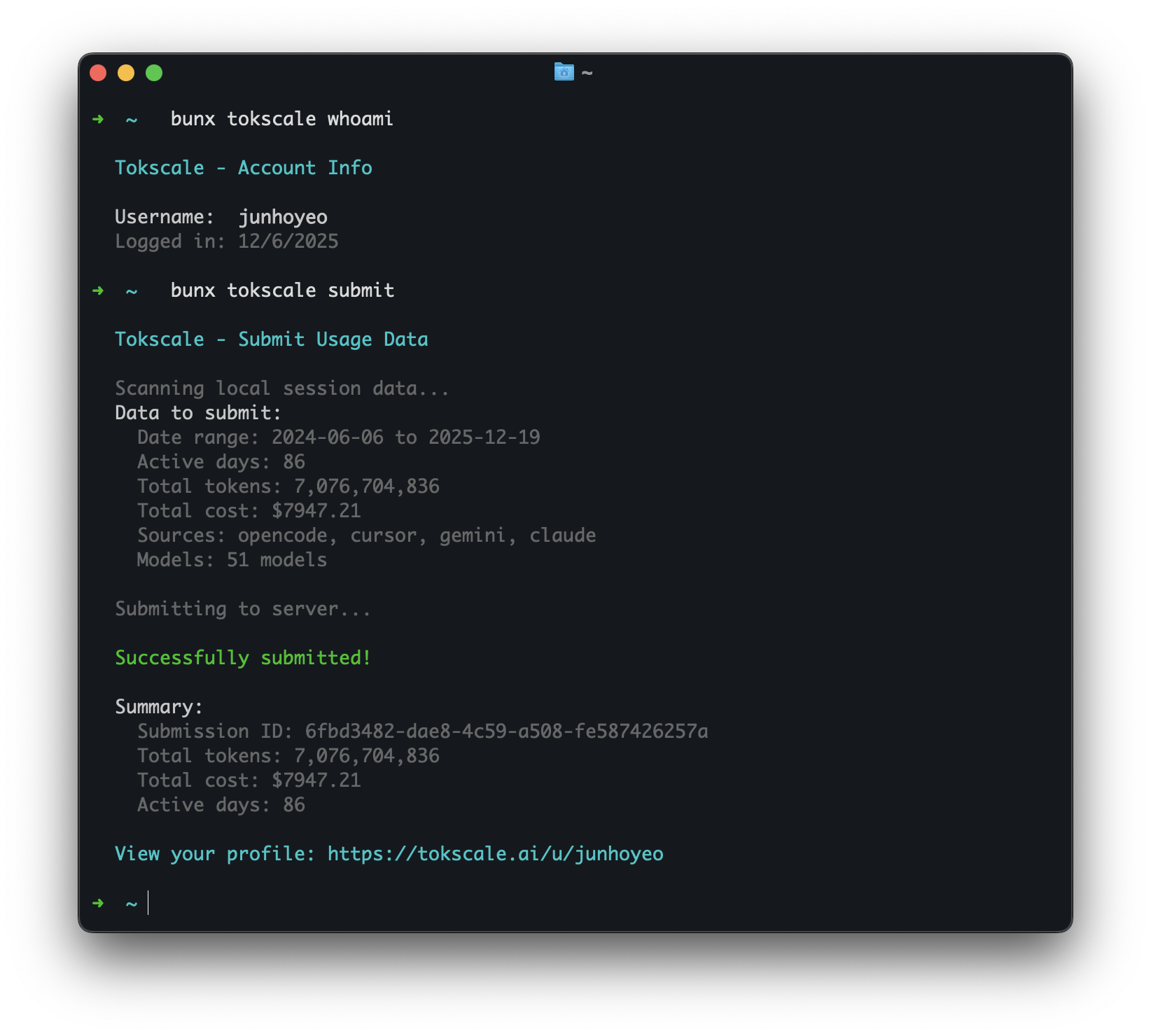

bunx tokscale@latest submitto submit your usage data to the leaderboard and create your public profile!

Overview

Tokscale helps you monitor and analyze your token consumption from:

| Client | Data Location | Supported |
|---|---|---|
| OpenCode | ~/.local/share/opencode/opencode.db (1.2+, all channels including opencode-stable.db) and/or ~/.local/share/opencode/storage/message/ (legacy/unmigrated) | ✅ Yes |
| Claude Code | ~/.claude/projects/ and ~/.claude/transcripts/ | ✅ Yes |
| OpenClaw | ~/.openclaw/agents/ (+ legacy: .clawdbot, .moltbot, .moldbot) | ✅ Yes |
| Codex CLI | ~/.codex/sessions/ | ✅ Yes |
| GitHub Copilot CLI | ~/.copilot/otel/*.jsonl (+ COPILOT_OTEL_FILE_EXPORTER_PATH) | ✅ Yes |
| Hermes Agent | $HERMES_HOME/state.db (fallback: ~/.hermes/state.db) | ✅ Yes |
| Gemini CLI | $GEMINI_CLI_HOME/tmp/*/chats/*.json (fallback: ~/.gemini/tmp/*/chats/*.json) | ✅ Yes |
| Cursor IDE | Cursor API export cached at ~/.config/tokscale/cursor-cache/usage*.csv (not ~/.cursor) | ✅ Yes |
| Amp (AmpCode) | ~/.local/share/amp/threads/ | ✅ Yes |
| Codebuff | ~/.config/manicode/ (+ manicode-dev, manicode-staging; override via CODEBUFF_DATA_DIR) | ✅ Yes |
| Droid (Factory Droid) | ~/.factory/sessions/ | ✅ Yes |
| Pi | ~/.pi/agent/sessions/ and ~/.omp/agent/sessions/ (Oh My Pi) | ✅ Yes |
| Kimi CLI | ~/.kimi/sessions/ | ✅ Yes |
| Qwen CLI | ~/.qwen/projects/ | ✅ Yes |
| Roo Code | ~/.config/Code/User/globalStorage/rooveterinaryinc.roo-cline/tasks/ (+ server: ~/.vscode-server/data/User/globalStorage/rooveterinaryinc.roo-cline/tasks/) | ✅ Yes |
| Kilo | ~/.config/Code/User/globalStorage/kilocode.kilo-code/tasks/ (+ server: ~/.vscode-server/data/User/globalStorage/kilocode.kilo-code/tasks/) | ✅ Yes |
| Kilo CLI | ~/.local/share/kilo/kilo.db | ✅ Yes |
| Mux | ~/.mux/sessions/ | ✅ Yes |
| Crush | $XDG_DATA_HOME/crush/projects.json (project registry; fallback: ~/.local/share/crush/projects.json) | ✅ Yes |
| Goose | ~/.local/share/goose/sessions/sessions.db (+ macOS Application Support, legacy Block/goose paths; override via GOOSE_PATH_ROOT) | ✅ Yes |
| Google Antigravity | Cached via tokscale antigravity sync to ~/.config/tokscale/antigravity-cache/sessions/*.jsonl (live RPC against the local language server) | ✅ Yes |
| Trae IDE / Trae Solo (international) | Cached via tokscale trae sync to ~/.config/tokscale/trae-cache/sessions/*.json (account-level usage from the official API) | ✅ Yes |
| Zed Agent | ~/.local/share/zed/threads/threads.db (macOS: ~/Library/Application Support/Zed/threads/threads.db; Windows: %LOCALAPPDATA%/Zed/threads/threads.db; hosted Zed models only, not external ACP agents) | ✅ Yes |
| Kiro | ~/.kiro/sessions/cli/*.json (+ *.jsonl) and ~/.local/share/kiro-cli/data.sqlite3 (macOS: ~/Library/Application Support/kiro-cli/data.sqlite3) | ✅ Yes |
| Synthetic | Re-attributed from other sources via hf: model prefix or synthetic provider (+ Octofriend: ~/.local/share/octofriend/sqlite.db) | ✅ Yes |

Get real-time pricing calculations using 🚅 LiteLLM's pricing data, with support for tiered pricing models and cache token discounts.

Why "Tokscale"?

This project is inspired by the Kardashev scale, a method proposed by astrophysicist Nikolai Kardashev to measure a civilization's level of technological advancement based on its energy consumption. A Type I civilization harnesses all energy available on its planet, Type II captures the entire output of its star, and Type III commands the energy of an entire galaxy.

In the age of AI-assisted development, tokens are the new energy. They power our reasoning, fuel our productivity, and drive our creative output. Just as the Kardashev scale tracks energy consumption at cosmic scales, Tokscale measures your token consumption as you scale the ranks of AI-augmented development. Whether you're a casual user or burning through millions of tokens daily, Tokscale helps you visualize your journey up the scale—from planetary developer to galactic code architect.

Contents

- Overview

- Features

- Installation

- Usage

- Frontend Visualization

- Social Platform

- Wrapped 2025

- Development

- Supported Platforms

- Session Data Retention

- Data Sources

- Pricing

- Contributing

- Acknowledgments

- License

Features

- Interactive TUI Mode - Beautiful terminal UI powered by Ratatui (default mode)

  - 6 interactive views: Overview, Models, Daily, Hourly, Stats, Agents (plus an optional Minutely view, opt-in via minutelyTabEnabled)

  - Keyboard & mouse navigation

  - GitHub-style contribution graph with 9 color themes

  - Real-time filtering and sorting

  - Zero flicker rendering

- Multi-platform support - Track usage across OpenCode, Claude Code, Codex CLI, Copilot CLI, Cursor IDE, Gemini CLI, Amp, Codebuff, Droid, OpenClaw, Hermes Agent, Pi, Kimi CLI, Qwen CLI, Roo Code, Kilo, Mux, Kilo CLI, Crush, Goose, Antigravity, Zed, Kiro, Trae, and Synthetic

- Real-time pricing - Fetches current pricing from LiteLLM with 1-hour disk cache; automatic OpenRouter fallback and Cursor model pricing for newly released models

- Detailed breakdowns - Input, output, cache read/write, and reasoning token tracking

- Native Rust core - All parsing and aggregation done in Rust for 10x faster processing

- Web visualization - Interactive contribution graph with 2D and 3D views

- Flexible filtering - Filter by platform, date range, or year

- Export to JSON - Generate data for external visualization tools

- Social Platform - Share your usage, compete on leaderboards, and view public profiles

Installation

Quick Start

# Run directly with npx

npx tokscale@latest

# Or use bunx

bunx tokscale@latest

# Or use Deno without installing an alias

deno x npm:tokscale@latest

# Light mode (table rendering only)

npx tokscale@latest --light

That's it! This gives you the full interactive TUI experience with zero setup.

Package Structure: tokscale is an alias package (like swc) that installs @tokscale/cli. Both install the same CLI with the native Rust core (@tokscale/core) included.

Prerequisites

Development Setup

For local development or building from source:

# Clone the repository

git clone https://github.com/junhoyeo/tokscale.git

cd tokscale

# Install Bun (if not already installed)

curl -fsSL https://bun.sh/install | bash

# Install dependencies

bun install

# Run the CLI in development mode

bun run cli

Note: bun run cli is for local development. When installed via bunx tokscale, the command runs directly. The Usage section below shows the installed binary commands.

Building the Native Module

The native Rust module is required for CLI operation. It provides ~10x faster processing through parallel file scanning and SIMD JSON parsing:

# Build the native core (run from repository root)

bun run build:core

Note: Native binaries are pre-built and included when you install via bunx tokscale@latest. Building from source is only needed for local development.

Usage

Basic Commands

# Launch interactive TUI (default)

tokscale

# Launch TUI with specific tab

tokscale models # Models tab

tokscale monthly # Daily view (shows daily breakdown)

tokscale hourly # Hourly tab

# Use legacy CLI table output

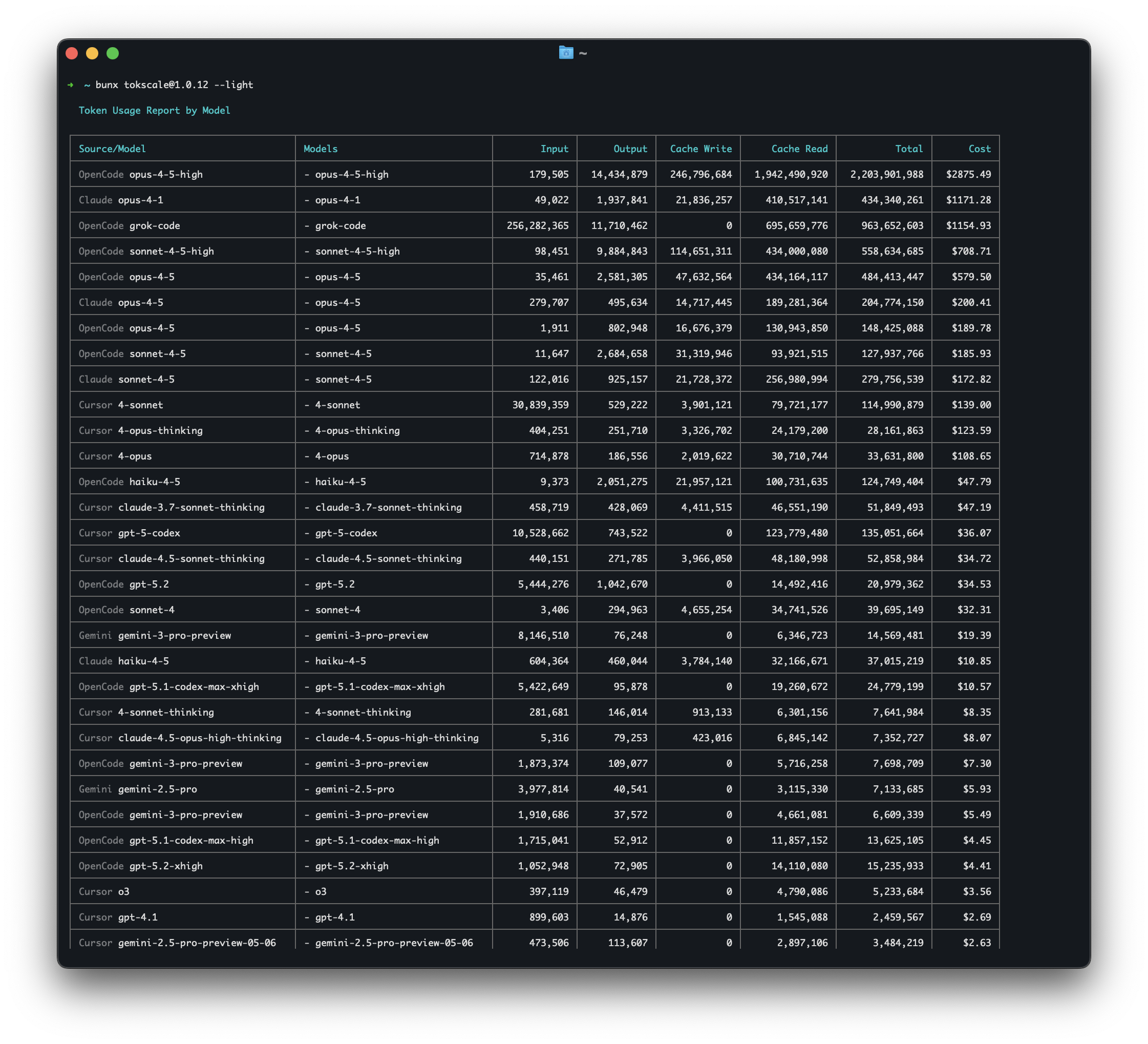

tokscale --light

tokscale models --light

# Launch TUI explicitly

tokscale tui

# Export contribution graph data as JSON

tokscale graph --output data.json

# Output data as JSON (for scripting/automation)

tokscale --json # Default models view as JSON

tokscale models --json # Models breakdown as JSON

tokscale monthly --json # Monthly breakdown as JSON

tokscale models --json > report.json # Save to file

TUI Features

The interactive TUI mode provides:

- 8 Views: Overview (chart + top models), Usage (subscription quotas), Models, Daily, Hourly, Stats (contribution graph), Agents. A per-minute view (Minutely) is hidden by default and can be enabled with minutelyTabEnabled in settings.json (see Configuration)

- Keyboard Navigation:

  - ←/→/Tab/BackTab: Switch views

  - ↑/↓ or Home/End: Navigate lists

  - Enter: Open daily detail (Daily tab) / select graph cell (Stats tab)

  - Esc or Backspace: Close dialog or exit detail view

  - c/d/t: Sort by cost/date/tokens

  - j: Jump to today

  - s: Open source picker dialog

  - g: Open group-by picker dialog (model, client+model, client+provider+model, workspace+model, session+model, client+session+model)

  - h: Toggle Daily/Hourly chart granularity (Overview tab)

  - v: Toggle Table/Profile view (Hourly tab)

  - y: Copy selected row to clipboard

  - p: Cycle through 9 color themes

  - r: Refresh data; Shift+R toggles auto-refresh; +/- adjusts interval

  - e: Export to JSON

  - q or Ctrl+C: Quit

- Mouse Support: Click tabs, buttons, and filters

- Themes: Green, Halloween, Teal, Blue, Pink, Purple, Orange, Monochrome, YlGnBu

- Settings Persistence: Preferences saved to ~/.config/tokscale/settings.json (see Configuration)

Group-By Strategies

Press g in the TUI or use --group-by in --light/--json mode to control how model rows are aggregated:

| Strategy | Flag | TUI Default | Effect |
|---|---|---|---|
| Model | --group-by model | ✅ | One row per model; merges all clients and providers |
| Client + Model | --group-by client,model | | One row per client-model pair |
| Client + Provider + Model | --group-by client,provider,model | | Most granular; no merging |
| Workspace + Model | --group-by workspace,model | | Group local usage by workspace key, then model |
| Session + Model | --group-by session,model | | One row per session_id and model; attributes cost to a specific agent-CLI session |
| Client + Session + Model | --group-by client,session,model | | One row per client, session, and model; useful for multi-agent runners that join on session_id |

--group-by model (most consolidated)

| Clients | Providers | Model | Cost |

|---|---|---|---|

| OpenCode, Claude, Amp | github-copilot, anthropic | claude-opus-4-5 | $2,424 |

| OpenCode, Claude | anthropic, github-copilot | claude-sonnet-4-5 | $1,332 |

--group-by client,model (CLI default)

| Client | Provider | Model | Cost |

|---|---|---|---|

| OpenCode | github-copilot, anthropic | claude-opus-4-5 | $1,368 |

| Claude | anthropic | claude-opus-4-5 | $970 |

--group-by client,provider,model (most granular)

| Client | Provider | Model | Cost |

|---|---|---|---|

| OpenCode | github-copilot | claude-opus-4-5 | $1,200 |

| OpenCode | anthropic | claude-opus-4-5 | $168 |

| Claude | anthropic | claude-opus-4-5 | $970 |

--group-by session,model (per-session cost attribution)

tokscale models --json --group-by session,model emits one entry per (session_id, model). Each entry includes a top-level sessionId field so downstream tools (e.g. multi-agent IDEs) can join cost data back to a specific agent-CLI session:

{

"groupBy": "session,model",

"entries": [

{

"sessionId": "019e1e27-af49-7cd1-89b7-7bad1c3f3be2",

"client": "codex",

"provider": "openai",

"model": "gpt-5",

"input": 25251,

"output": 47,

"cacheRead": 1920,

"cacheWrite": 0,

"reasoning": 40,

"messageCount": 12,

"cost": 0.0123

}

]

}

Use --group-by client,session,model when you also need the client name on every row (one spawn across all 20+ supported CLIs at once).
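As a rough illustration of how a downstream tool might consume this output, the sketch below totals cost per session from a payload shaped like the sample above. The field names come from that sample; the `cost_by_session` helper and the inlined payload are illustrative assumptions, not part of Tokscale.

```python
import json
from collections import defaultdict

# Payload shaped like the sample above; a real run would read the output of
# `tokscale models --json --group-by session,model` instead.
SAMPLE = """
{
  "groupBy": "session,model",
  "entries": [
    {"sessionId": "019e1e27-af49-7cd1-89b7-7bad1c3f3be2", "client": "codex",
     "provider": "openai", "model": "gpt-5", "input": 25251, "output": 47,
     "cacheRead": 1920, "cacheWrite": 0, "reasoning": 40,
     "messageCount": 12, "cost": 0.0123}
  ]
}
"""


def cost_by_session(report):
    """Sum the cost of every (session, model) entry per sessionId."""
    totals = defaultdict(float)
    for entry in report.get("entries", []):
        totals[entry["sessionId"]] += entry["cost"]
    return dict(totals)


totals = cost_by_session(json.loads(SAMPLE))
for session_id, cost in totals.items():
    print(f"{session_id}: ${cost:.4f}")
```

A session that used several models appears as several entries, so summing by sessionId gives the per-session total.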

Filtering by Platform

Use --client (short -c) to scope reports to one or more clients. The flag is repeatable, accepts comma-separated values, and works with every report command:

# Show only OpenCode usage

tokscale --client opencode

# Comma-separated: combine multiple clients

tokscale --client opencode,claude

# Repeated: same effect, useful with shell aliases

tokscale -c opencode -c claude

# Cursor IDE uses Tokscale's API cache; run login + sync first

tokscale --client cursor

# Synthetic (synthetic.new) is detected from other agent sessions

tokscale --client synthetic

# Combine with other filters

tokscale --client opencode,claude --week --json

Possible values: opencode, claude, codex, copilot, gemini, cursor, amp, codebuff, droid, openclaw, hermes, pi, kimi, qwen, roocode, kilocode, kilo, mux, crush, goose, antigravity, zed, kiro, trae, synthetic.

Deprecation notice: The legacy single-client flags (--opencode, --claude, --codex, etc.) still work for backward compatibility but are hidden from --help and will be removed in the next major release. Migrate to --client whenever possible. Running tokscale in an interactive terminal will print a one-line warning when a legacy flag is used.

Date Filtering

Date filters work across all commands that generate reports (tokscale, tokscale models, tokscale monthly, tokscale graph):

# Quick date shortcuts

tokscale --today # Today only

tokscale --week # Last 7 days

tokscale --month # Current calendar month

# Custom date range (inclusive, local timezone)

tokscale --since 2024-01-01 --until 2024-12-31

# Filter by year

tokscale --year 2024

# Combine with other options

tokscale models --week --client claude --json

tokscale monthly --month --benchmark

Note: Date filters use your local timezone. Both --since and --until are inclusive.

v2.2.0 note: Session active-time daily buckets also use your local timezone, so users outside UTC may see active-time dates align with local token/cost report days instead of UTC day boundaries.
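To make the inclusive, local-timezone behavior concrete, here is a minimal sketch of such a filter. The `in_range` helper is hypothetical and takes the timezone as an explicit parameter so the example is reproducible; it is not Tokscale's implementation.

```python
from datetime import date, datetime, timedelta, timezone


def in_range(timestamp, since, until, tz):
    """True if timestamp falls on a day within [since, until] as seen in tz."""
    local_day = timestamp.astimezone(tz).date()
    return since <= local_day <= until  # both bounds are inclusive


# 16:00 UTC on Dec 31 is already 01:00 on Jan 1 in UTC+9.
stamp = datetime(2024, 12, 31, 16, 0, tzinfo=timezone.utc)
utc_plus_9 = timezone(timedelta(hours=9))

in_utc = in_range(stamp, date(2024, 1, 1), date(2024, 12, 31), timezone.utc)
in_local = in_range(stamp, date(2024, 1, 1), date(2024, 12, 31), utc_plus_9)
print(in_utc, in_local)
```

The same message can therefore land inside or outside a date range depending on the timezone in which the report is generated.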

Pricing Lookup

Look up real-time pricing for any model:

# Look up model pricing

tokscale pricing "claude-3-5-sonnet-20241022"

tokscale pricing "gpt-4o"

tokscale pricing "grok-code"

# Force specific provider source

tokscale pricing "grok-code" --provider openrouter

tokscale pricing "claude-3-5-sonnet" --provider litellm

# Inspect custom pricing overrides

tokscale pricing list-overrides

Lookup Strategy:

The pricing lookup uses a multi-step resolution strategy:

- Custom Pricing Overrides - Exact user-defined entries from ~/.config/tokscale/custom-pricing.json

- Exact Match - Direct lookup in LiteLLM/OpenRouter databases

- Alias Resolution - Resolves friendly names (e.g., big-pickle → glm-4.7)

- Tier Suffix Stripping - Removes quality tiers (gpt-5.2-xhigh → gpt-5.2)

- Version Normalization - Handles version formats (claude-3-5-sonnet ↔ claude-3.5-sonnet)

- Provider Prefix Matching - Tries common prefixes (anthropic/, openai/, etc.)

- Cursor Model Pricing - Hardcoded pricing for models not yet in LiteLLM/OpenRouter (e.g., gpt-5.3-codex)

- Fuzzy Matching - Word-boundary matching for partial model names
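The name-normalization steps in this list (alias resolution, tier suffix stripping, version normalization) can be pictured as generating candidate names and taking the first one found in a price table. The sketch below is only an approximation under stated assumptions: the tier list, the alias table, the regular expression, and both function names are hypothetical, and only the example pairs shown above come from the README.

```python
import re

# Assumed tier suffixes; only "-xhigh" appears in the examples above.
TIER_SUFFIXES = ("-xhigh", "-high", "-medium", "-low")
# Assumed alias table; only this pair appears in the examples above.
ALIASES = {"big-pickle": "glm-4.7"}


def candidate_names(model_id):
    """Return the names to try, in order, without duplicates."""
    name = model_id.lower()
    candidates = [name]

    def add(candidate):
        if candidate not in candidates:
            candidates.append(candidate)

    add(ALIASES.get(name, name))  # alias resolution
    for suffix in TIER_SUFFIXES:  # tier suffix stripping
        if name.endswith(suffix):
            add(name[: -len(suffix)])
    # Version normalization: "3-5" between separators becomes "3.5".
    add(re.sub(r"(\d)-(\d)(?=-|$)", r"\1.\2", name))
    return candidates


def resolve(model_id, price_table):
    """Return the first candidate name present in price_table, else None."""
    for candidate in candidate_names(model_id):
        if candidate in price_table:
            return candidate
    return None


print(candidate_names("gpt-5.2-xhigh"))
print(candidate_names("claude-3-5-sonnet"))
```

Provider prefix matching, Cursor model pricing, and fuzzy matching are left out to keep the sketch short.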

Custom Pricing Overrides

Create custom-pricing.json in Tokscale's config directory (~/.config/tokscale/custom-pricing.json on macOS/Linux by default; the same directory resolved by TOKSCALE_CONFIG_DIR when set) to override prices for model IDs that upstream pricing databases do not yet cover correctly.

{

"$schema": "https://tokscale.dev/custom-pricing.schema.json",

"models": {

"accounts/fireworks/routers/kimi-k2p6-turbo": {

"input_cost_per_million_tokens": 2.00,

"output_cost_per_million_tokens": 8.00,

"cache_read_input_token_cost_per_million_tokens": 0.30,

"source": "https://docs.fireworks.ai/serverless/pricing",

"notes": "Fireworks Kimi K2.6 Turbo (preview)"

},

"accounts/fireworks/models/kimi-k2p6": {

"input_cost_per_million_tokens": 0.95,

"output_cost_per_million_tokens": 4.00,

"cache_read_input_token_cost_per_million_tokens": 0.16

},

"kimi-k2p6-turbo": {

"input_cost_per_million_tokens": 2.00,

"output_cost_per_million_tokens": 8.00

}

}

}

Override prices are entered in dollars per million tokens, matching how most API providers publish pricing; Tokscale converts them to per-token rates internally. At least one of input_cost_per_million_tokens or output_cost_per_million_tokens must be present and positive, and cache-read/cache-creation fields are optional. LiteLLM-style per-token field names such as input_cost_per_token, output_cost_per_token, and cache_read_input_token_cost are also accepted for copy/paste compatibility, but the per-million names are the recommended user-facing form. To omit a tier or cache price, leave the field out; negative or non-finite values are treated as invalid and the whole model entry is skipped so typos do not silently alter accounting. Optional source and notes fields are ignored by Tokscale and can be used for your own bookkeeping.
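The validation and conversion rules in this paragraph can be sketched as follows. This is an illustration of the described behavior for the per-million field names only, not Tokscale's code; `normalize_entry` and the short rate names are hypothetical.

```python
import math

# Per-million field names from the example above, mapped to short rate names.
PER_MILLION_FIELDS = {
    "input_cost_per_million_tokens": "input",
    "output_cost_per_million_tokens": "output",
    "cache_read_input_token_cost_per_million_tokens": "cache_read",
}


def normalize_entry(entry):
    """Return per-token rates for one model entry, or None if it is invalid."""
    rates = {}
    for field, name in PER_MILLION_FIELDS.items():
        if field not in entry:
            continue  # omitted fields are simply left out
        value = entry[field]
        is_number = isinstance(value, (int, float)) and not isinstance(value, bool)
        if not is_number or not math.isfinite(value) or value < 0:
            return None  # one bad value invalidates the whole entry
        rates[name] = value / 1_000_000  # dollars per million -> dollars per token
    # At least one of input/output must be present and positive.
    if rates.get("input", 0) <= 0 and rates.get("output", 0) <= 0:
        return None
    return rates


rates = normalize_entry({
    "input_cost_per_million_tokens": 2.00,
    "output_cost_per_million_tokens": 8.00,
    "notes": "ignored bookkeeping field",
})
print(rates)
```

Rejecting the whole entry on a single bad value mirrors the stated goal that typos should not silently alter accounting.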

Overrides are exact-only and case-insensitive. Tokscale checks the raw model ID first, then the existing synthetic /models/ normalization, then falls through to LiteLLM, OpenRouter, Cursor pricing, and fuzzy matching if no override matches. Raw exact matches beat normalized exact matches, so accounts/fireworks/routers/kimi-k2p6-turbo can override one gateway-specific model while kimi-k2p6-turbo can cover normalized /models/ paths. Overrides are loaded once at startup; restart the command after editing the file. This is the recommended local fix for wrong-model pricing bugs while waiting on upstream LiteLLM pricing updates.

Provider Preference:

When multiple matches exist, original model creators are preferred over resellers:

| Preferred (Original) | Deprioritized (Reseller) |
|---|---|
| xai/ (Grok) | azure_ai/ |
| anthropic/ (Claude) | bedrock/ |
| openai/ (GPT) | vertex_ai/ |
| google/ (Gemini) | together_ai/ |
| meta-llama/ | fireworks_ai/ |

Example: grok-code matches xai/grok-code-fast-1 ($0.20/$1.50) instead of azure_ai/grok-code-fast-1 ($3.50/$17.50).
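One way to picture this preference is as a ranking over the provider prefix of each candidate match. The sketch below uses the prefixes from the table; placing unknown providers between the two groups is an assumption of this example, and `rank` and `pick_match` are hypothetical names.

```python
# Prefixes from the table above.
PREFERRED = ("xai/", "anthropic/", "openai/", "google/", "meta-llama/")
DEPRIORITIZED = ("azure_ai/", "bedrock/", "vertex_ai/", "together_ai/", "fireworks_ai/")


def rank(model_key):
    """Lower rank wins: original creators, then unknown providers, then resellers."""
    if model_key.startswith(PREFERRED):
        return 0
    if model_key.startswith(DEPRIORITIZED):
        return 2
    return 1  # assumption: unlisted providers sit between the two groups


def pick_match(matches):
    """Choose the best key among several candidate matches."""
    return min(matches, key=rank)


best = pick_match(["azure_ai/grok-code-fast-1", "xai/grok-code-fast-1"])
print(best)
```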

Social

# Login to Tokscale (opens browser for GitHub auth)

tokscale login

# Save an existing Tokscale API token without browser auth

tokscale login --token tt_xxx

# Check who you're logged in as

tokscale whoami

# Display your saved API token as a QR code (useful for sharing to another device)

# Encodes {"token":"tt_xxx","username":"..."} — scan with any QR reader

tokscale qr

# Submit your usage data to the leaderboard

tokscale submit

# Submit in CI/headless environments without writing credentials

# Precedence: TOKSCALE_API_TOKEN env > saved credentials file (~/.config/tokscale/credentials.json).

# When the env var is set, the saved file is ignored for that invocation.

TOKSCALE_API_TOKEN=tt_xxx tokscale submit

# Revoke a token: visit Settings > API Tokens on the leaderboard site

# (https://tokscale.com/settings) and click "Revoke" on the token row.

# Revocation takes effect immediately — subsequent requests with that

# token will get HTTP 401 "Invalid API token".

# Submit with filters

tokscale submit --client opencode,claude --since 2024-01-01

# Preview what would be submitted (dry run)

tokscale submit --dry-run

# Logout

tokscale logout

Cursor IDE Commands

Cursor IDE support uses Cursor's web API export, cached by Tokscale at ~/.config/tokscale/cursor-cache/usage*.csv. Tokscale does not parse local Cursor Agent CLI state under ~/.cursor.

Setup:

- Open https://www.cursor.com/settings in your browser and sign in.

- Copy the WorkosCursorSessionToken cookie value:

  - Network tab: make any request to cursor.com/api/*, then copy the value after WorkosCursorSessionToken= from the Cookie request header.

  - Application tab: open Cookies -> https://www.cursor.com, then copy the WorkosCursorSessionToken value.

- Run tokscale cursor login --name work and paste the token.

- Run tokscale cursor sync to populate ~/.config/tokscale/cursor-cache/usage.csv.

- Run tokscale --client cursor or any report command.

Treat the session token like a password. It is stored locally in ~/.config/tokscale/cursor-credentials.json.

# Login to Cursor (requires session token from browser)

# --name is optional; it just helps you identify accounts later

tokscale cursor login --name work

# Check Cursor authentication status and session validity

tokscale cursor status

# List saved Cursor accounts

tokscale cursor accounts

# Manually refresh cached Cursor usage

tokscale cursor sync

# Switch active account (controls which account syncs to cursor-cache/usage.csv)

tokscale cursor switch work

# Logout from a specific account (keeps history; excludes it from aggregation)

tokscale cursor logout --name work

# Logout and delete cached usage for that account

tokscale cursor logout --name work --purge-cache

# Logout from all Cursor accounts (keeps history; excludes from aggregation)

tokscale cursor logout --all

# Logout from all accounts and delete cached usage

tokscale cursor logout --all --purge-cache

By default, Tokscale aggregates usage across all saved Cursor accounts by reading cursor-cache/usage*.csv. The active account syncs to usage.csv; additional accounts sync to usage.<account>.csv.

When you log out, Tokscale moves cached usage to cursor-cache/archive/ so it is no longer aggregated. Use --purge-cache to delete cached usage instead.
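The file layout described above can be explored with a short script. This sketch assumes only the naming scheme stated here (usage.csv for the active account, usage.<account>.csv for others, archived files under archive/); the `cached_accounts` helper and the "(active)" label are hypothetical.

```python
import tempfile
from pathlib import Path


def cached_accounts(cache_dir):
    """Map each usage*.csv file directly inside cache_dir to an account label."""
    accounts = {}
    # Path.glob without "**" does not descend into archive/.
    for path in sorted(Path(cache_dir).glob("usage*.csv")):
        if path.name == "usage.csv":
            accounts["(active)"] = path
        else:
            # usage.<account>.csv -> <account>
            accounts[path.name[len("usage."):-len(".csv")]] = path
    return accounts


# Demonstrate against a throwaway directory instead of the real cache.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "archive").mkdir()
    for name in ("usage.csv", "usage.personal.csv", "archive/usage.old.csv"):
        (root / name).write_text("")
    found = sorted(cached_accounts(root))

print(found)
```

Because the pattern is not recursive, files moved to archive/ drop out of the result, which matches the logout behavior described above.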

Antigravity Commands

Antigravity sync currently works on macOS and Linux only. The Antigravity-enabled editor must be running and its local language server available; tokscale reads usage from that local language server and caches normalized artifacts locally.

# Check whether tokscale can see running Antigravity language servers

tokscale antigravity status

# Sync usage from local Antigravity language servers into tokscale's cache

tokscale antigravity sync

# Delete the cached Antigravity artifacts

tokscale antigravity purge-cache

Cache location: ~/.config/tokscale/antigravity-cache/

How it works: tokscale antigravity sync discovers local Antigravity session candidates, fetches confirmed usage data from the local language server RPC, and stores normalized JSONL artifacts for tokscale-core to parse later. Run sync before reports if you want the freshest Antigravity data.

Trae Commands

Trae (ByteDance's AI IDE) ships in two international product lines — Trae IDE and Trae Solo. They share the same account-level usage data (same backend, same JWT), so tokscale reports them as a single trae client. You can install either or both desktop apps; tokscale auto-discovers credentials from whichever is present.

Credentials are identified per desktop app via --variant:

- --variant ide: credentials from Trae IDE (~/Library/Application Support/Trae/)

- --variant solo: credentials from Trae Solo (~/Library/Application Support/TRAE SOLO/)

tokscale trae sync calls the official query_user_usage_group_by_session API exactly once per run (regardless of how many desktop apps are installed) and persists the raw JSON to a local cache.

# Log in (auto-detects credentials from any installed Trae desktop client)

tokscale trae login

# Manual JWT entry (for environments where auto-detect can't find storage.json,

# e.g. Linux/Windows or a headless server). Open https://www.trae.ai/account-setting#usage

# in your browser, then F12 → Network → filter `query_user_usage` and copy the

# `Authorization` header value.

tokscale trae login --manual --variant solo

# Show which variants have cached credentials

tokscale trae status

# Sync usage (uses the first available credential source)

tokscale trae sync --since 30

# Forget cached credentials for one variant

tokscale trae logout --variant solo

Cache location: ~/.config/tokscale/trae-cache/

How it works: tokscale either decrypts the desktop client's iCubeAuthInfo://* blob (globalStorage/storage.json) to recover a JWT, or accepts one pasted via --manual. It then calls POST /trae/api/v1/pay/query_user_usage_group_by_session paginated and stores the raw JSON. Run sync before reports if you want the freshest Trae data.

Note on pricing: Trae cost figures are vendor-reported. tokscale surfaces the dollar_float value returned by Trae's own API rather than recomputing cost from token counts through tokscale's pricing engine. Numbers will match what you see on trae.ai/account-setting#usage, not what tokscale would otherwise calculate for the same usage.

China variants: The China editions (trae.com.cn) are intentionally not supported. The CN backend does not expose a session-level usage query API. Trae CN / Trae Solo CN support will be added once an official endpoint becomes available upstream.

Subscription Usage

Tokscale can fetch and display your real-time subscription quota across AI providers. This shows how much of your plan you've used and when limits reset.

# Show subscription usage for all detected providers

tokscale usage

# Output as JSON (for scripting)

tokscale usage --json

# Lightweight terminal output (no TUI)

tokscale usage --light

In the TUI, navigate to the Usage tab to see subscription data. Press u or r to refresh.

Note: Subscription quotas and balances are vendor-reported — tokscale calls each provider's own quota endpoint and surfaces the response verbatim. Numbers reflect what the provider reports (which is also what shows up in their official dashboards) and are not independently verified against tokscale's own usage tracking.

Supported Providers

| Provider | Auth Method | Metrics | Setup |
|---|---|---|---|
| Claude | OAuth (credentials file or macOS Keychain) | Session (5hr), Weekly, Opus quotas | Run claude to log in |
| Codex (OpenAI) | OAuth (~/.config/codex/auth.json or ~/.codex/auth.json) | Session, Weekly quotas | Run codex to log in |
| Z.ai | API key (env var) | Token limits, Web Searches | Set ZAI_API_KEY or GLM_API_KEY |
| Amp | API key (~/.local/share/amp/secrets.json) | Free tier balance, Credits | Run amp to log in |
| GitHub Copilot | GitHub token (keychain or ~/.config/gh/hosts.yml) | Premium interactions, Chat quotas | Run gh auth login |
| Kimi | OAuth (~/.kimi/credentials/kimi-code.json) | Session, Weekly quotas | Run kimi to log in |
| MiniMax | API key (env var) | Prompt quotas per model | Set MINIMAX_API_KEY or MINIMAX_API_TOKEN |

Providers are auto-detected — only those with valid credentials are shown. If a provider is missing, ensure you've logged in or set the required environment variable.

Example Output

╭──────────────────────────────────────────────────────────╮

│ Session 85% left [=========---] resets in 2h 15m │

│ Weekly 72% left [========----] resets Fri 3pm │

│ Plan Max 20x │

╰──────────────────────────────────────────────────────────╯

╭──────────────────────────────────────────────────────────╮

│ Session 40% left [=====-------] resets in 4h 30m │

│ Weekly 90% left [==========--] resets Mon 12am │

│ Account user@example.com │

│ Plan Pro │

╰──────────────────────────────────────────────────────────╯

Example Output (--light version)

Configuration

Tokscale stores settings in ~/.config/tokscale/settings.json:

{

"colorPalette": "blue",

"includeUnusedModels": false,

"defaultClients": ["opencode", "claude"],

"scanner": {

"extraScanPaths": {

"codex": [

"/Users/me/workspace/project-a/.codex/sessions",

"/Users/me/workspace/project-b/.codex/archived_sessions"

],

"hermes": [

"/Users/me/.hermes/profiles/director_planning",

"/Users/me/.hermes/profiles/research/state.db"

]

}

}

}

| Setting | Type | Default | Description |
|---|---|---|---|
| colorPalette | string | "blue" | TUI color theme (green, halloween, teal, blue, pink, purple, orange, monochrome, ylgnbu) |
| includeUnusedModels | boolean | false | Show models with zero tokens in reports |
| autoRefreshEnabled | boolean | false | Enable auto-refresh in TUI |
| autoRefreshMs | number | 60000 | Auto-refresh interval (30000-3600000ms) |
| nativeTimeoutMs | number | 300000 | Maximum time for native subprocess processing (5000-3600000ms) |
| defaultClients | string[] | [] | Client filter applied when no --client/-c flag is passed. Accepts the same ids as --client (e.g. ["opencode", "claude", "synthetic"]). Unknown ids are silently dropped. CLI flags always override this list completely, with no merging. |
| light.writeCache | boolean | false | When true, tokscale --light overwrites the TUI cache atomically after rendering. CLI flags --write-cache / --no-write-cache override per-invocation. |
| minutelyTabEnabled | boolean | false | Show the per-minute Minutely tab in the TUI and aggregate per-minute usage during data loading. Default-off because minute-granularity is a niche/diagnostic view for most users and the per-minute bucketing has a non-trivial cost on large datasets. |
| scanner.extraScanPaths | object | {} | Additional per-client scan roots for sessions outside Tokscale's default home-root locations |

Use scanner.extraScanPaths for persistent extra roots such as project-level .codex directories, imported Gemini/OpenClaw histories, or Hermes profile databases. Hermes entries may point at a profile directory containing state.db or directly at a state.db file. Tokscale merges these paths with the default scan roots on every run and deduplicates overlapping roots by canonical path.

Use defaultClients to pin a personal default — for example, set it to ["opencode", "claude"] if those are the only clients you use, and tokscale (with no flags) will scope every report to them automatically. Pass --client on the command line to override for a single run.
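The two rules above (CLI flags replace defaultClients outright, and extra scan roots are merged and deduplicated by canonical path) can be sketched as follows. Both helpers are hypothetical illustrations of the described behavior, and KNOWN_CLIENTS is a shortened subset of the ids listed under Filtering by Platform.

```python
import os

# Subset of the ids listed under "Filtering by Platform", for brevity.
KNOWN_CLIENTS = {"opencode", "claude", "codex", "gemini", "cursor", "synthetic"}


def effective_clients(cli_clients, default_clients):
    """CLI flags replace defaultClients entirely; unknown default ids are dropped."""
    if cli_clients:
        return list(cli_clients)
    return [c for c in default_clients if c in KNOWN_CLIENTS]


def merge_scan_roots(default_roots, extra_roots):
    """Merge scan roots, deduplicating by canonical (symlink-resolved) path."""
    merged, seen = [], set()
    for root in [*default_roots, *extra_roots]:
        canonical = os.path.realpath(os.path.expanduser(root))
        if canonical not in seen:
            seen.add(canonical)
            merged.append(canonical)
    return merged


print(effective_clients(["codex"], ["opencode", "claude"]))
print(effective_clients([], ["opencode", "not-a-client"]))
```

Using os.path.realpath means two spellings of the same directory, such as a trailing slash or a symlinked parent, collapse to a single scan root.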

Enabling the Minutely tab

The Minutely tab shows a per-minute breakdown of token usage and is most useful for diagnosing burst patterns, debugging a single session, or watching activity in near-real-time alongside autoRefreshEnabled. It is hidden by default because the per-minute aggregation runs over every parsed message during data loading, which adds RAM and CPU cost that most users do not need.

To enable it, set minutelyTabEnabled to true in ~/.config/tokscale/settings.json:

{

"minutelyTabEnabled": true

}

After restart, the Minutely tab appears between Hourly and Stats in the tab strip, and Tab / BackTab / Left / Right navigation cycles through it. Set the flag back to false to hide the tab and skip the aggregation again.

Cache directory layout

The regenerable CLI/TUI/pricing/Wrapped caches now live under ~/.config/tokscale/cache/ (or ${TOKSCALE_CONFIG_DIR}/cache/ when overridden). Integration sync artifacts remain in client-specific cache roots such as ~/.config/tokscale/antigravity-cache/ and ~/.config/tokscale/trae-cache/:

- tui-data-cache.json: TUI startup cache

- source-message-cache.bin + source-message-cache.lock: source-message cache + lock file

- pricing-litellm.json / pricing-openrouter.json: pricing caches

- opencode-migration.json: OpenCode migration record

- fonts/ and images/: Wrapped asset caches

It is safe to delete this directory. Tokscale will recreate and repopulate it on demand.
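If a script needs to locate or clear this directory, the lookup rule above can be sketched as follows. The helper name tokscale_cache_dir is hypothetical, and the sketch ignores the legacy macOS location.

```python
import os
from pathlib import Path
from typing import Mapping


def tokscale_cache_dir(env: Mapping[str, str] = os.environ) -> Path:
    """Return the cache directory, honoring TOKSCALE_CONFIG_DIR when set."""
    override = env.get("TOKSCALE_CONFIG_DIR")
    if override:
        # Joining onto the CWD leaves absolute paths unchanged and anchors
        # relative ones to the current working directory.
        config_root = Path.cwd() / override
    else:
        config_root = Path.home() / ".config" / "tokscale"
    return config_root / "cache"
```

Passing the environment as a parameter keeps the function easy to test without mutating os.environ.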

Environment Variables

Environment variables override config file values. For CI/CD or one-off use:

| Variable | Default | Description |
|---|---|---|
| TOKSCALE_NATIVE_TIMEOUT_MS | 300000 (5 min) | Overrides nativeTimeoutMs config |
| TOKSCALE_API_TOKEN | unset | Tokscale personal API token for non-interactive submit and delete-submitted-data runs. Create one from Settings > API Tokens or save it locally with tokscale login --token tt_xxx. |
| TOKSCALE_EXTRA_DIRS | unset | One-off extra session roots as client:/abs/path,client:/abs/path |
| TOKSCALE_CONFIG_DIR | unset | Overrides the config directory root (where settings.json, star-cache.json, cache/, antigravity-cache/, and trae-cache/ live). Absolute path recommended; relative paths resolve against the process CWD. Useful for CI sandboxes or pinning a non-default location. When set, tokscale will not fall back to the legacy macOS ~/Library/Application Support/tokscale/ path. |

# Example: Increase timeout for very large datasets

TOKSCALE_NATIVE_TIMEOUT_MS=600000 tokscale graph --output data.json

# Example: one-off extra scan roots

TOKSCALE_EXTRA_DIRS='codex:/Users/me/workspace/project-a/.codex/sessions,gemini:/Users/me/imports/imac/gemini/tmp' tokscale

# Example: submit from CI without an interactive browser login

TOKSCALE_API_TOKEN=tt_xxx tokscale submit

Note: For persistent extra roots, prefer scanner.extraScanPaths in ~/.config/tokscale/settings.json. TOKSCALE_EXTRA_DIRS is best for one-off overrides or CI/CD.
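The client:/abs/path,client:/abs/path format of TOKSCALE_EXTRA_DIRS is simple enough to check before launching a run. The parser below is a sketch of that format as documented here, not Tokscale's own parsing code; parse_extra_dirs is a hypothetical helper, and it assumes paths contain no commas.

```python
def parse_extra_dirs(value: str) -> list[tuple[str, str]]:
    """Split 'client:/abs/path,client:/abs/path' into (client, path) pairs."""
    pairs: list[tuple[str, str]] = []
    for entry in value.split(","):
        entry = entry.strip()
        if not entry:
            continue  # tolerate trailing or doubled commas
        # Split on the first colon only, so the path may contain colons.
        client, sep, path = entry.partition(":")
        if not sep or not client or not path:
            raise ValueError(f"expected client:/abs/path, got {entry!r}")
        pairs.append((client, path))
    return pairs
```

A wrapper script could call this on the variable and fail fast on a malformed entry instead of discovering it after a long scan.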

Headless Mode

Tokscale can aggregate token usage from Codex CLI headless outputs for automation, CI/CD pipelines, and batch processing.

What is headless mode?

When you run Codex CLI with JSON output flags (e.g., codex exec --json), it outputs usage data to stdout instead of storing it in its regular session directories. Headless mode allows you to capture and track this usage.

Storage location: ~/.config/tokscale/headless/

On macOS, Tokscale also scans ~/Library/Application Support/tokscale/headless/ when TOKSCALE_HEADLESS_DIR is not set.

Tokscale automatically scans this directory structure:

~/.config/tokscale/headless/

└── codex/ # Codex CLI JSONL outputs

Environment variable: Set TOKSCALE_HEADLESS_DIR to customize the headless log directory:

export TOKSCALE_HEADLESS_DIR="$HOME/my-custom-logs"

Recommended (automatic capture):

| Tool | Command Example |
|---|---|
| Codex CLI | tokscale headless codex exec -m gpt-5 "implement feature" |

Manual redirect (optional):

| Tool | Command Example |
|---|---|
| Codex CLI | codex exec --json "implement feature" > ~/.config/tokscale/headless/codex/ci-run.jsonl |

Diagnostics:

# Show scan locations and headless counts

tokscale sources

tokscale sources --json

CI/CD integration example:

# In your GitHub Actions workflow
- name: Run AI automation
  run: |
    mkdir -p ~/.config/tokscale/headless/codex
    codex exec --json "review code changes" \
      > ~/.config/tokscale/headless/codex/pr-${{ github.event.pull_request.number }}.jsonl

# Later, track usage
- name: Report token usage
  run: tokscale --json

Note: Headless capture is supported for Codex CLI only. If you run Codex directly, redirect stdout to the headless directory as shown above.
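When using the manual redirect, a failed codex run can leave an empty file behind. A small pre-flight check before the reporting step might look like the sketch below. It assumes TOKSCALE_HEADLESS_DIR replaces the headless root that contains codex/, which is an interpretation of the text above; headless_codex_logs is a hypothetical helper, and tokscale sources remains the authoritative diagnostic.

```python
import os
from pathlib import Path
from typing import Mapping


def headless_codex_logs(env: Mapping[str, str] = os.environ) -> list[Path]:
    """List non-empty Codex JSONL captures under the headless directory."""
    override = env.get("TOKSCALE_HEADLESS_DIR")
    if override:
        root = Path(override)
    else:
        root = Path.home() / ".config" / "tokscale" / "headless"
    codex_dir = root / "codex"
    if not codex_dir.is_dir():
        return []
    # Skip zero-byte files, which usually mean the redirected command failed.
    return sorted(p for p in codex_dir.glob("*.jsonl") if p.stat().st_size > 0)
```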

Frontend Visualization

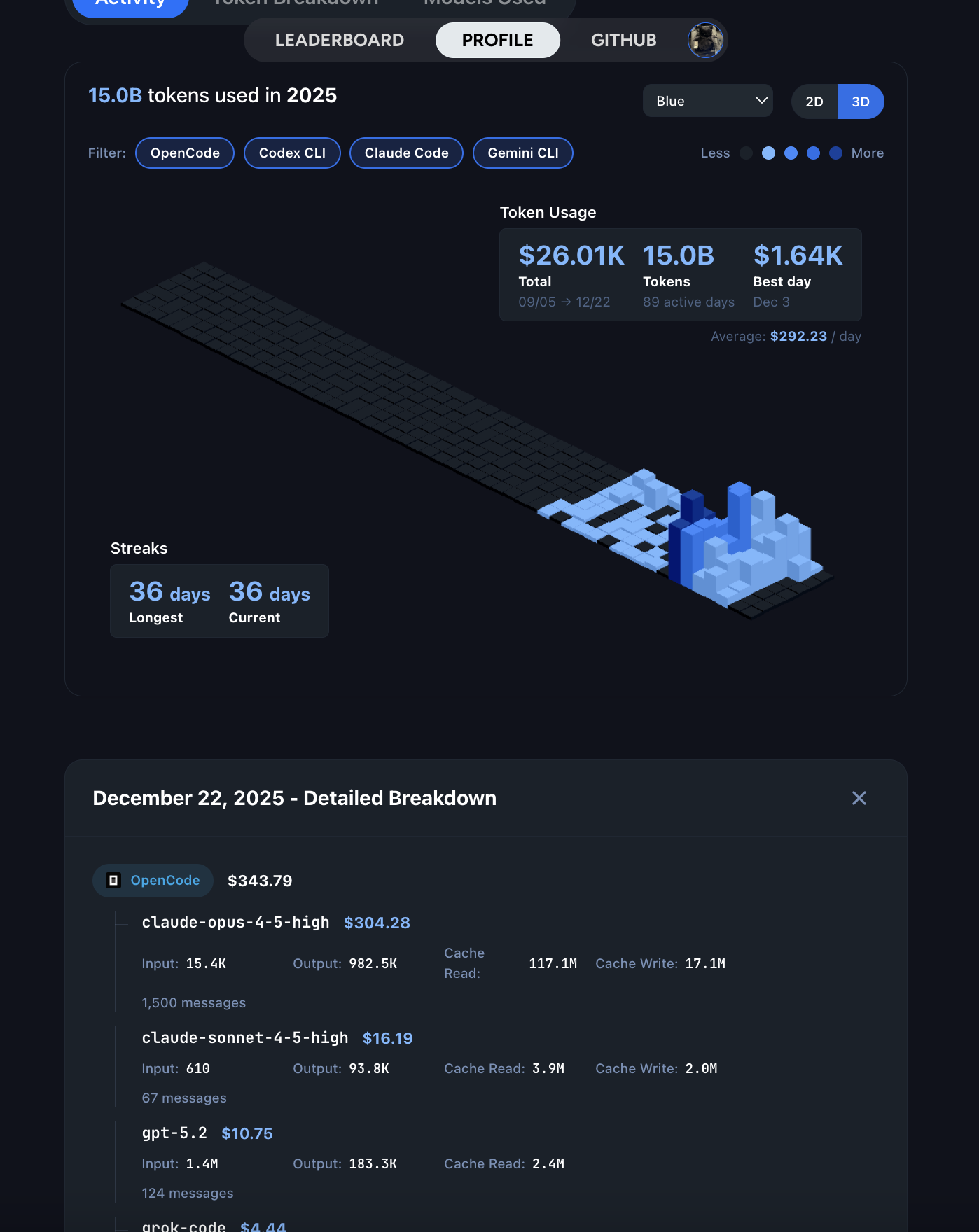

The frontend provides a GitHub-style contribution graph visualization:

Features

- 2D View: Classic GitHub contribution calendar

- 3D View: Isometric 3D contribution graph with height based on token usage

- Multiple color palettes: GitHub, GitLab, Halloween, Winter, and more

- 3-way theme toggle: Light / Dark / System (follows OS preference)

- GitHub Primer design: Uses GitHub's official color system

- Interactive tooltips: Hover for detailed daily breakdowns

- Day breakdown panel: Click to see per-source and per-model details

- Year filtering: Navigate between years

- Source filtering: Filter by platform (OpenCode, Claude, Codex, Copilot, Cursor, Gemini, Amp, Codebuff, Droid, OpenClaw, Hermes Agent, Pi, Kimi, Qwen, Roo Code, Kilo, Mux, Kilo CLI, Crush, Goose, Antigravity, Zed, Kiro, Trae, Synthetic)

- Stats panel: Total cost, tokens, active days, streaks

- FOUC prevention: Theme applied before React hydrates (no flash)

Running the Frontend

cd packages/frontend

bun install

bun run dev

Open http://localhost:3000 to access the social platform.

Social Platform

Tokscale includes a social platform where you can share your usage data and compete with other developers.

Features

- Leaderboard - See who's using the most tokens across all platforms

- User Profiles - Public profiles with contribution graphs and statistics

- Period Filtering - View stats for all time, this month, or this week

- GitHub Integration - Login with your GitHub account

- Local Viewer - View your data privately without submitting

GitHub Profile Embed Widget

You can embed your public Tokscale stats directly in your GitHub profile README:

[](https://tokscale.ai/u/<username>)

Replace <username> with your GitHub username. With no query parameters this renders the default classic card; append any of the parameters below to customize the design.

| Parameter | Values | Effect |
|---|---|---|
| template | classic (default) · minimal · terminal · graph · orbit · vitals · blueprint · receipt | Card design |
| color | blue · green · teal · purple · pink · orange · monochrome · halloween · YlGnBu | Accent color and contribution-graph palette |
| theme | dark (default) · light | Light or dark card |
| sort | tokens (default) · cost | Which leaderboard the rank is taken from |
| tokens, cost | compact · full | Number format, set independently: 20.9B vs 20,941,000,000 |
| rank | plain (default, #134) · percent (top 12%) · total (#134 / 1,174) | How the leaderboard rank is shown |
| graph | 1 to append the contribution graph (off by default) | Supported by classic, minimal, terminal, orbit, blueprint, receipt |
| compact | 1 for the compact layout | classic only |
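The number and rank styles in the table can be reproduced locally to preview what a card will show. The sketch below is an approximation built from the sample values in the table, not the widget's rendering code; the function names, the one-decimal rounding, and rounding the percentage up are all assumptions.

```python
import math


def format_number(value: int, style: str = "compact") -> str:
    """Render a count as '20.9B' (compact) or '20,941,000,000' (full)."""
    if style == "full":
        return f"{value:,}"
    for threshold, suffix in ((1_000_000_000, "B"), (1_000_000, "M"), (1_000, "K")):
        if value >= threshold:
            return f"{value / threshold:.1f}{suffix}"
    return str(value)


def format_rank(rank: int, total: int, style: str = "plain") -> str:
    """Render a rank as '#134', 'top 12%', or '#134 / 1,174'."""
    if style == "percent":
        # Assumption: the percentage is rounded up to a whole number.
        return f"top {math.ceil(rank / total * 100)}%"
    if style == "total":
        return f"#{rank} / {total:,}"
    return f"#{rank}"
```

With the table's sample values, format_number(20_941_000_000) gives 20.9B and format_rank(134, 1174, "percent") gives top 12%.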


GitHub Profile Badge

You can also use a shields.io-style badge for a more compact display:

- Replace <username> with your GitHub username

- Optional query params:
  - metric=tokens (default), metric=cost, or metric=rank
  - style=flat (default) or style=flat-square
  - sort=tokens (default) or sort=cost to control ranking basis
  - compact=1 to use compact number notation (e.g., 1.2M, $3.4K)
  - label=<text> to override the left-side label
  - color=<hex> to override the right-side color (e.g., color=ff5733)

- Examples:
  - https://tokscale.ai/api/badge/<username>/svg?metric=cost&compact=1
  - https://tokscale.ai/api/badge/<username>/svg?metric=rank&sort=cost&style=flat-square
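If you generate READMEs or dashboards from a script, the badge URL can be assembled programmatically. The sketch below uses only the URL pattern and query parameters listed above; badge_url is a hypothetical helper, and it does not validate parameter names or values.

```python
from urllib.parse import quote, urlencode


def badge_url(username: str, **params: str) -> str:
    """Build a badge URL such as .../api/badge/<username>/svg?metric=cost."""
    # Percent-encode the username so it is safe as a single path segment.
    base = f"https://tokscale.ai/api/badge/{quote(username, safe='')}/svg"
    # urlencode escapes values and joins them with '&' in call order.
    return f"{base}?{urlencode(params)}" if params else base
```

For example, badge_url("octocat", metric="cost", compact="1") produces the first example URL above with octocat (a placeholder) as the username.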

Getting Started

- Login - Run tokscale login to authenticate via GitHub, or create an API token in Settings for CI/headless use

- Submit - Run tokscale submit to upload your usage data

- View - Visit the web platform to see your profile and the leaderboard

Data Validation

Submitted data goes through Level 1 validation:

- Mathematical consistency (totals match, no negatives)

- No future dates

- Required fields present

- Duplicate detection
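To make the four checks concrete, here is a rough sketch of what such validation could look like on a list of daily entries. The server-side rules and payload schema are not documented here, so the field names (date, input_tokens, output_tokens, total_tokens) and the function validate_submission are purely illustrative.

```python
from datetime import date


def validate_submission(days: list[dict], today: date) -> list[str]:
    """Return a list of human-readable problems; empty means the data passed."""
    required = ("date", "input_tokens", "output_tokens", "total_tokens")
    errors: list[str] = []
    seen_dates: set[date] = set()
    for index, day in enumerate(days):
        # Required fields present
        missing = [key for key in required if key not in day]
        if missing:
            errors.append(f"entry {index}: missing {', '.join(missing)}")
            continue
        # Duplicate detection
        if day["date"] in seen_dates:
            errors.append(f"entry {index}: duplicate date {day['date']}")
        seen_dates.add(day["date"])
        # No future dates
        if day["date"] > today:
            errors.append(f"entry {index}: date {day['date']} is in the future")
        # Mathematical consistency: no negatives, totals match
        counts = (day["input_tokens"], day["output_tokens"], day["total_tokens"])
        if any(count < 0 for count in counts):
            errors.append(f"entry {index}: negative token count")
        if day["input_tokens"] + day["output_tokens"] != day["total_tokens"]:
            errors.append(f"entry {index}: total does not match its parts")
    return errors
```

Collecting every problem instead of stopping at the first one makes it easier to fix a rejected submission in a single pass.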

Wrapped 2025

Generate a beautiful year-in-review image summarizing your AI coding assistant usage—inspired by Spotify Wrapped.

| bunx tokscale@latest wrapped | bunx tokscale@latest wrapped --clients | bunx tokscale@latest wrapped --agents --disable-pinned |
|---|---|---|

Command

# Generate wrapped image for current year

tokscale wrapped

# Generate for a specific year

tokscale wrapped --year 2025

What's Included

The generated image includes:

- Total Tokens - Your total token consumption for the year

- Top Models - Your 3 most-used AI models ranked by cost

- Top Clients - Your 3 most-used platforms (OpenCode, Claude Code, Cursor, etc.)

- Messages - Total number of AI interactions

- Active Days - Days with at least one AI interaction

- Cost - Estimated total cost based on LiteLLM pricing

- Streak - Your longest consecutive streak of active days

- Contribution Graph - A visual heatmap of your yearly activity

The generated PNG is optimized for sharing on social media. Share your coding journey with the community!
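The Streak figure is the longest run of consecutive active days. One way to compute it from a set of active dates is sketched below; this illustrates the definition and is not the code Tokscale uses, and longest_streak is a hypothetical name.

```python
from datetime import date, timedelta


def longest_streak(active_days: set[date]) -> int:
    """Length of the longest run of consecutive dates in active_days."""
    one_day = timedelta(days=1)
    best = 0
    for day in active_days:
        # Only count from the first day of a run, so each run is walked once.
        if day - one_day in active_days:
            continue
        length = 1
        while day + one_day * length in active_days:
            length += 1
        best = max(best, length)
    return best
```

Because runs are only walked from their first day, the work stays linear in the number of active days.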

Development

Quick setup: If you just want to get started quickly, see Development Setup in the Installation section above.

Prerequisites

# Bun (required)

bun --version

# Rust (for native module)

rustc --version

cargo --version

How to Run

After following the Development Setup, you can:

# Build n

---

*README truncated. [Continue reading on GitHub](https://github.com/junhoyeo/tokscale#readme)*