The state of Hermes Agent — April 2026

A community report on the first six weeks of Nous Research's self-improving AI agent.

TL;DR

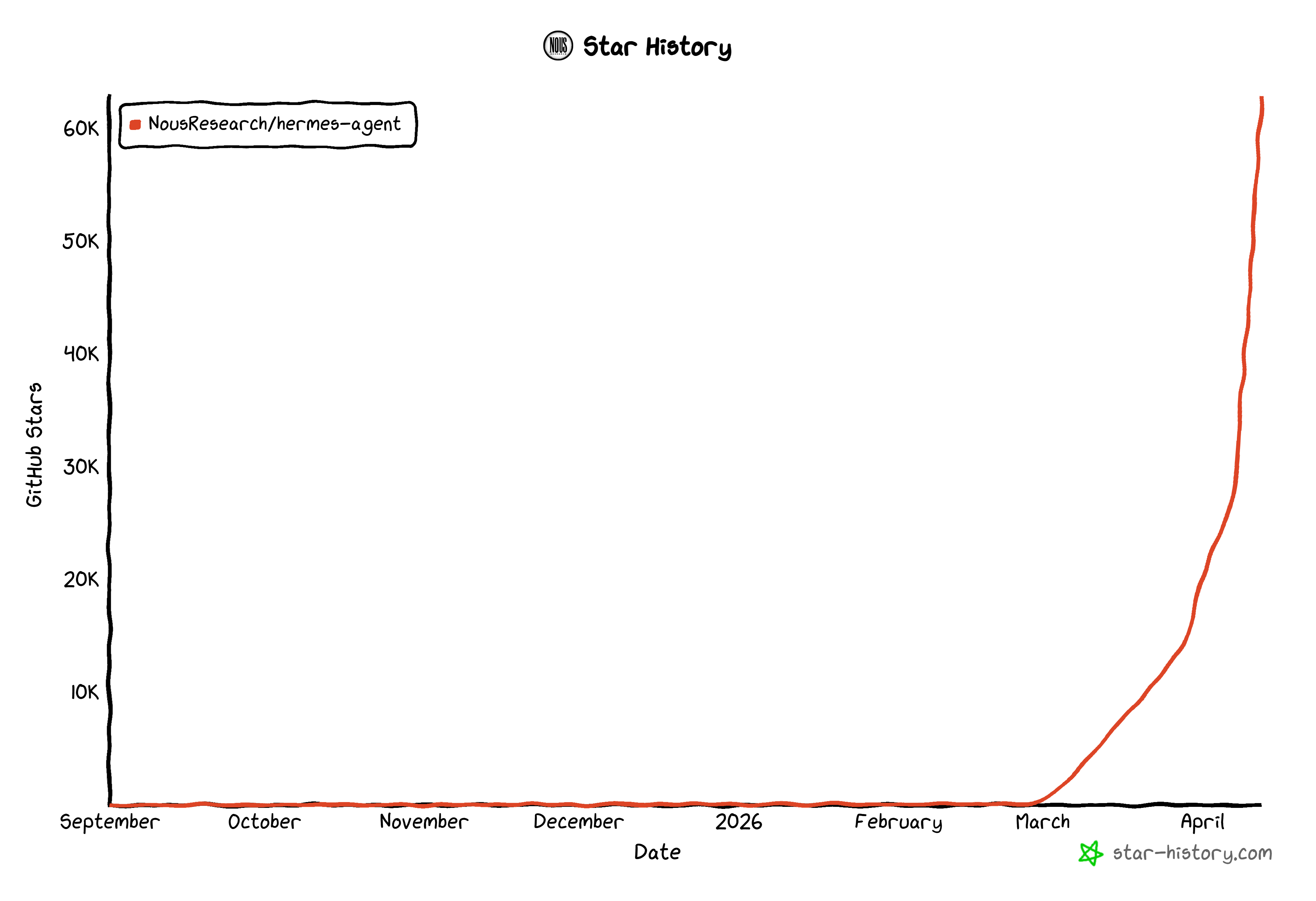

Six weeks ago, Nous Research quietly released Hermes Agent — an open-source AI agent built around a single contrarian premise: what if your AI got smarter the more you used it, instead of resetting at the end of every session? The community noticed. The repo went from 0 to 57,200 GitHub stars in six weeks, growing faster than OpenClaw at the same stage.

But star counts only tell part of the story. The real signal is what's been built around it. In just six weeks, the community has shipped:

- 3 official Nous Research extensions (autonovel, hermes-paperclip-adapter, hermes-agent-self-evolution)

- 17 community skill libraries (including the 4,132-star Anthropic Cybersecurity Skills collection mapped to MITRE ATT&CK)

- 8 external memory providers (Honcho, Mem0, Hindsight, Supermemory, and others)

- 9 multi-agent orchestration frameworks (mission-control alone has 3,875 stars)

- 7 deployment templates spanning Docker, Nix, systemd, and managed cloud

- 80+ quality-filtered repos in total, growing at roughly 5,000 GitHub stars per week ecosystem-wide

This report unpacks how a model-research lab from upstate New York turned an open-source release into a movement, what Hermes does differently from OpenClaw and Claude Code, and what to watch for in Q2.

1. What Hermes Agent actually is

Hermes Agent is an autonomous AI agent that lives on a server (or your laptop, or a $5 VPS) and gets more capable over time. Three things make it different from every other AI agent framework released in the last two years:

1. A built-in learning loop. When Hermes completes a complex task — say, debugging a microservice or refactoring a Rust crate — it can synthesize that experience into a permanent skill document. These skills follow the agentskills.io open standard: structured Markdown with metadata, written in natural language, callable as slash commands. Next time the agent encounters something similar, it doesn't start from zero. It loads the relevant skill and gets to work.

2. Persistent multi-level memory. Hermes maintains a bounded MEMORY.md (the agent's notes) and USER.md (user profile) that always live in the system prompt. Below that, every session is stored in SQLite with FTS5 full-text search, so the agent can recall any past conversation. Above that, eight pluggable external memory providers (Honcho, Mem0, Hindsight, Supermemory, RetainDB, ByteRover, OpenViking, Holographic) handle long-term semantic memory and graph-based recall. This three-layer architecture is the defining technical decision of the project.

3. A unified gateway across 14 messaging platforms. A single Hermes instance can run as a Telegram bot, a Discord bot, a Slack app, a WhatsApp client, a Signal contact, an SMS endpoint, an Email auto-responder, a Matrix integration, a Mattermost bot, and more — all from the same daemon, with one shared session and one shared memory. You can start a conversation in CLI and continue it in Telegram, then ask it to send the result to your team in Slack.
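To make the skill-document idea from the first point concrete, here is a minimal sketch of parsing one. The report only says skills are structured Markdown with metadata that can be called as slash commands, so the `---` front-matter layout, the `name` and `description` fields, and the `Skill` and `parse_skill` names are all assumptions for illustration, not the agentskills.io schema or Hermes's actual loader.

```python
from dataclasses import dataclass


@dataclass
class Skill:
    name: str
    description: str
    body: str

    @property
    def slash_command(self) -> str:
        # Assumption: the slash command is simply "/" plus the skill name.
        return "/" + self.name


def parse_skill(text: str) -> Skill:
    """Split a skill document into metadata and a Markdown body.

    Assumes the file opens with a '---' delimited block of 'key: value' lines.
    """
    lines = text.strip().splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("missing front matter")
    try:
        end = next(i for i, line in enumerate(lines[1:], start=1)
                   if line.strip() == "---")
    except StopIteration:
        raise ValueError("unterminated front matter") from None
    meta = {}
    for line in lines[1:end]:
        if ":" in line:
            key, value = line.split(":", 1)
            meta[key.strip()] = value.strip()
    body = "\n".join(lines[end + 1:]).strip()
    return Skill(meta["name"], meta.get("description", ""), body)


# Hypothetical skill document, written for this example only.
EXAMPLE = """\
---
name: debug-flaky-test
description: Steps that worked for isolating a flaky integration test
---
1. Re-run the failing test in isolation with verbose logging.
2. Compare timings against the last green run.
"""

skill = parse_skill(EXAMPLE)
print(skill.slash_command)
```

The point of the sketch is the shape: metadata the agent can index, plus a natural-language body it can load the next time a similar task comes up.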

The core repo is MIT-licensed Python (~9,200 lines in run_agent.py alone), supports 20+ LLM providers (OpenRouter, Nous Portal, Anthropic, OpenAI, Gemini, local Ollama, vLLM, etc.), and runs on six execution backends from local processes to Daytona cloud sandboxes.
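The middle memory layer described above (sessions stored in SQLite with FTS5 full-text search) is easy to picture with Python's standard `sqlite3` module. This is a sketch under assumptions: the `messages` table, its columns, and the helper names are hypothetical rather than Hermes's real schema, and it requires a SQLite build with FTS5 enabled, which most Python distributions include.

```python
import sqlite3


def open_session_store(path=":memory:"):
    """Create a hypothetical session store with an FTS5 index on message text."""
    conn = sqlite3.connect(path)
    # session_id and role are stored but not tokenized; only content is searchable.
    conn.execute(
        "CREATE VIRTUAL TABLE IF NOT EXISTS messages "
        "USING fts5(session_id UNINDEXED, role UNINDEXED, content)"
    )
    return conn


def log_message(conn, session_id, role, content):
    conn.execute(
        "INSERT INTO messages (session_id, role, content) VALUES (?, ?, ?)",
        (session_id, role, content),
    )


def recall(conn, query, limit=5):
    """Return matching rows ordered by FTS5's built-in relevance rank."""
    return conn.execute(
        "SELECT session_id, role, content FROM messages "
        "WHERE messages MATCH ? ORDER BY rank LIMIT ?",
        (query, limit),
    ).fetchall()


conn = open_session_store()
log_message(conn, "s1", "user", "The staging cluster uses Postgres 15 behind pgbouncer")
log_message(conn, "s2", "user", "Remind me to renew the TLS certificate in May")
hits = recall(conn, "pgbouncer")
print(hits)
```

Keyword recall like this is cheap and local, which is presumably why it sits beneath the semantic and graph-based providers rather than replacing them.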

2. The launch timeline

February 25, 2026: Nous Research drops Hermes Agent on GitHub with a simple tweet: "Meet Hermes Agent, the open source agent that grows with you." The launch post gets 557 likes — strong but not viral.

February 26-March 10: Tech press picks it up. MarkTechPost runs a piece framing it as a fix for "AI forgetfulness." Bitcoin News crosses the crypto/AI divide with an explainer. The Top AI Product blog calls it "the open-source AI agent that finally remembers everything."

March 11: Hits 22,000 GitHub stars and 242 contributors — this would have been a great six-month number for most projects.

March 15-25: The skill ecosystem explodes. Vercel Labs publishes their official vercel-labs/agent-skills library. Black Forest Labs (the FLUX image gen team) ships official Hermes-compatible image generation skills. Anthropic releases a 754-skill cybersecurity collection mapped to MITRE ATT&CK, NIST CSF 2.0, and D3FEND.

March 23: Nous Research ships v0.4.0 — what Teknium calls "the platform expansion release." 200+ bug fixes, 6 new messaging adapters (Signal, DingTalk, SMS, Mattermost, Matrix, Webhook), MCP server management with OAuth 2.1, an OpenAI-compatible API server, and gateway prompt caching. The release is built from 300 PRs in five days.

April 3: Anthropic blocks OpenClaw from accessing Claude Code subscriptions. The Hacker News thread reaches #1 with 1,064 points and 811 comments. Nous Research immediately tweets: "If you're having trouble with your lobster-themed agent since the recent update, try downloading Hermes Agent, then running 'hermes claw migrate'. We've been told this helps a lot." The cheeky migration tool tweet picks up 813 likes. Migration traffic spikes.

April 8: v0.8.0 ships with 209 PRs and 82 issue closures. Headline features: live model switching mid-session, free Xiaomi MiMo v2 Pro via Nous Portal, native Google AI Studio (Gemini) integration, Matrix tier-1 support, MCP OAuth 2.1 with PKCE plus OSV malware scanning, and the long-awaited notify_on_complete for background tasks. A Manim skill ships the same week and goes viral after Shopify CEO Tobi Lütke uses it to generate a 3Blue1Brown-style math animation in one prompt.

April 11: 57,200+ GitHub stars, 7,572 forks, 274 contributors. The ecosystem map at hermesatlas.com now tracks 80+ community projects. Nous Portal partnerships announced with MiniMax (M2.7) and Xiaomi (free MiMo v2 Pro for two weeks). Paradigm + a16z confirmed as Nous Research's lead investors.

That's six weeks.

3. Growth in numbers

| Metric | As of April 11, 2026 |
|---|---|
| GitHub stars (core repo) | 57,200 |
| Forks | 7,572 |
| Contributors | 274+ |
| Issues closed | 800+ |
| Releases | 8 (v0.1 → v0.8) |
| Messaging platforms | 14 |
| Community ecosystem repos | 80+ |
| External memory providers | 8 |
| Total ecosystem stars | 90,750 |

Growth velocity: Roughly 9,500 stars per week on the core repo, averaged over the six weeks since launch (57,200 stars / 6 weeks). For context, OpenClaw (which had a multi-month head start) is currently growing at ~3,000 stars per week. Hermes Agent has overtaken OpenClaw on weekly star growth.
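As a rough cross-check, the weekly rate can be recomputed from the dated star counts in the launch timeline (0 on February 25, 22,000 on March 11, 57,200 on April 11). The `stars_per_week` helper is my own; the inputs are the report's figures, and the result depends on which window you pick.

```python
from datetime import date


def stars_per_week(stars_start, stars_end, day_start, day_end):
    """Average weekly star gain between two dated observations."""
    days = (day_end - day_start).days
    return (stars_end - stars_start) / days * 7


launch = date(2026, 2, 25)
mar_11 = date(2026, 3, 11)
apr_11 = date(2026, 4, 11)

since_launch = stars_per_week(0, 57_200, launch, apr_11)       # 45 calendar days
since_mar_11 = stars_per_week(22_000, 57_200, mar_11, apr_11)  # 31 calendar days
nominal_six_weeks = 57_200 / 6                                 # "six weeks" taken literally

print(round(since_launch), round(since_mar_11), round(nominal_six_weeks))
# 8898 7948 9533
```

The headline figure of roughly 9,500 lines up with the nominal six-week average. Measured by calendar days, the rate is closer to 8,000 to 9,000 per week, which is still well ahead of the ~3,000 quoted for OpenClaw.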

Contributor profile: Teknium (Nous Research co-founder) leads on PR count, with 179 PRs in v0.8 alone. Top community contributors include @SHL0MS, @alt-glitch, @benbarclay, @CharlieKerfoot, and @WAXLYY. Fourteen distinct contributors merged PRs in the v0.8 release window, a healthy bus factor for a six-week-old project.

International reach: Coverage has expanded well beyond English-language tech press. Chinese AI developer @wangray published a deep technical analysis of Hermes's 4-layer memory system that picked up 258 likes and 49,000 views. Japanese developers have shipped install tutorials. The Chinese AI community has noted Nous Research's Web3 connections (Paradigm + a16z) as a sign of credible long-term backing.

4. The three-way positioning: Hermes vs OpenClaw vs Claude Code

A fair comparison matters here, because the community keeps trying to frame this as a winner-takes-all competition, and that's not really what's happening.

| Dimension | Claude Code | OpenClaw | Hermes Agent |
|---|---|---|---|
| Core identity | Pair-programmer in your IDE | Operations platform for teams | Personal assistant that learns |
| Architecture | IDE-integrated | Gateway control plane (Node.js) | Runtime AIAgent loop (Python) |
| Memory model | Auto-notes to disk | Unbounded Markdown | Bounded curation + SQLite FTS5 + 8 provider plugins |
| Skills | Static, human-authored | Static (5,700+ on ClawHub) | Autonomously created + refined by the agent |
| Channels | IDE only | 22+ including iMessage, IRC, LINE | 14 including Matrix, DingTalk, Feishu |
| License | Proprietary | MIT | MIT |
| Stars | N/A (Anthropic product) | ~250,000 | 57,200 |
| Execution | Local sandbox | Local | Local + Docker + SSH + Modal + Daytona + Singularity |

Where each one wins:

- Claude Code wins for focused coding sessions in an IDE. It's the best in-editor pair programmer Anthropic could build, and it integrates deeply with VS Code, Zed, and JetBrains. If you want an AI that helps you write code in your editor, this is the answer.

- OpenClaw wins for team operations and channel breadth. With 22+ channel integrations and a marketplace of 5,700+ skills, it's the most mature option for running a single agent across an entire company's communication surface. The gateway-first architecture is a strength for ops workflows.

- Hermes Agent wins for the learning loop and long-horizon work. If you want an agent that gets better at your specific workflows over time — that remembers your project's quirks, that builds reusable skills automatically, that you can trust to run cron jobs unsupervised — Hermes is currently the only credible option.

The community consensus, repeated across Reddit, X, Substack, and the Hermes Agent Discord: they're complementary, not competitive. A typical setup uses Claude Code for IDE coding, Hermes Agent for personal automation and learning, and OpenClaw or Slack-native bots for team-wide ops.

5. The architectural bet that defines Hermes

If you read just one section of this report, read this one. Hermes Agent's design embodies a specific bet about where AI agents are going, and that bet differs sharply from its competition.

The bet: Memory-bounded, deliberately curated agents will outperform unbounded "remember everything" agents in real-world use, because the constraint forces consolidation and consolidation produces better priors.

OpenClaw's memory is unbounded. You can dump anything into it, and the agent reads everything every turn. The implicit bet there is the opposite one: more context means better answers.

Hermes's memory is strictly bounded: 2,200 characters for MEMORY.md, 1,375 for USER.md. When you hit the limit, you can't just keep adding — you have to consolidate. The agent must explicitly decide what's worth remembering. This is a constraint that forces the agent to develop a working theory of what matters about you and your work, and then refine that theory as it gets new evidence.

It's the same bet that LLMs make about attention: you can't attend to everything, so you have to learn what to attend to. Hermes applies that bet at the memory layer.
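The consolidation pressure described above can be sketched in a few lines. The character budgets are the ones quoted in this section; the `BoundedMemory` class, its methods, and the newline-joined storage format are assumptions for illustration and not Hermes's actual implementation. In particular, the agent (not shown here) would be the one choosing what to merge.

```python
MEMORY_LIMIT = 2200  # MEMORY.md budget quoted in this report
USER_LIMIT = 1375    # USER.md budget quoted in this report


class MemoryFull(Exception):
    """Raised when a note would push the file past its character budget."""


class BoundedMemory:
    def __init__(self, limit):
        self.limit = limit
        self.entries = []

    def size(self):
        # Assumption: entries are stored one per line, so count the joined text.
        return len("\n".join(self.entries))

    def add(self, note):
        candidate = self.entries + [note]
        if len("\n".join(candidate)) > self.limit:
            raise MemoryFull(f"{note!r} does not fit in {self.limit} characters")
        self.entries = candidate

    def consolidate(self, old, merged):
        """Replace several entries with one shorter summary chosen by the caller."""
        candidate = [e for e in self.entries if e not in old] + [merged]
        if len("\n".join(candidate)) > self.limit:
            raise MemoryFull("consolidated memory still exceeds the limit")
        self.entries = candidate


# Tiny limit so the overflow is visible; real use would pass MEMORY_LIMIT.
memory = BoundedMemory(limit=60)
memory.add("Prefers pytest over unittest")
memory.add("Uses pytest-xdist in CI")
try:
    memory.add("Deploys on Fridays")
    overflowed = False
except MemoryFull:
    overflowed = True

# The new fact only fits after two older notes are merged into one.
memory.consolidate(
    old=["Prefers pytest over unittest", "Uses pytest-xdist in CI"],
    merged="Testing: pytest + xdist in CI",
)
memory.add("Deploys on Fridays")
print(overflowed, memory.size(), memory.entries)
```

The failed `add` is the interesting part: an unbounded store would have accepted the third note silently, whereas the bounded one forces a decision about what the first two notes really had in common.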

The early evidence suggests it's working. Users consistently report that Hermes "feels different" after 2-3 weeks of use in a way that other agents don't. One r/LocalLLaMA user reported a 40% speedup on repeated research tasks after Hermes auto-generated three skill documents over two hours. Another user — quoted on X by Robert Scoble — said "my Hermes agent spooked me with a very specific detail from a setup I was discussing with it 3 weeks ago."

Whether this bet is correct is still an open question. But it's a real bet, and it's the first credible alternative to the "infinite context window" school of thought that has dominated AI agent design for the past two years.

6. Community sentiment

Across X, Reddit, Substack, Hacker News, and the Hermes Agent Discord (~4,000 builders), community sentiment falls into two groups:

Positive:

- "The first agent harness that you immediately know was developed by people who have a vast knowledge" — @DataDeLaurier

- "Much less token hungry" than competitors — repeated across r/LocalLLaMA

- "Excellent right out of the box" with a "5-minute install" — repeated by multiple users

- "@NousResearch may have cooked with hermes agent" — @fujikanaeda (retweeted by Nous itself)

- "Hermes is definitely a better coder, id drop the claw altogether lol" — @teknium

Critical (acknowledged honestly):

- Some users report edge case issues with extended use; one well-known user (@ksubedi) returned to OpenClaw after testing

- Local model performance can be slower than running the model directly through LM Studio (one user reported 1-2 tokens/s through Hermes vs 45 tokens/s native)

- Setup, while generally praised, was called "more tedious than OpenClaw" by @chrysb in the most-liked critical post

The overall balance strongly favors Hermes. Across the dozens of posts I've reviewed, the negative ones are specific and technical (and are generally being addressed in releases), while the positive ones tend to be broader and more emotional ("magical," "spooked me," "the future").

7. The ecosystem map

Here's the breakdown of what the community has built around Hermes Agent in six weeks. Numbers reflect star counts as of April 11, 2026.

| Category | Count | Top project (stars) |
|---|---|---|
| Core & Official | 6 | hermes-agent (57.2K) |
| Skills & Registries | 17 | Anthropic-Cybersecurity-Skills (4.1K) |
| Memory & Context | 6 | hindsight (8.4K) |
| Multi-Agent & Orchestration | 7 | mission-control (3.9K) |
| Workspaces & GUIs | 4 | hermes-workspace (830) |
| Plugins & Extensions | 6 | hermes-web-search-plus (21) |
| Deployment & Infra | 7 | llm-agents.nix (967) |
| Integrations & Bridges | 5 | hermes-android (38) |
| Developer Tools | 9 | tokscale (1.7K) |
| Domain Applications | 8 | DashClaw (204) |
| Guides & Docs | 5 | awesome-hermes-agent (898) |
| Forks & Derivatives | 3 | hermes-agent-camel (12) |

The full quality-filtered, security-reviewed, live-data-updated map is available at hermesatlas.com.

The most surprising entries, to me at least, are the cross-pollinations: hermes-android puts Hermes on your phone via a Python bridge, hermes-blockchain-oracle is a Solana intelligence MCP server, hermes-embodied uses Hermes to fine-tune VLA robotics models on cloud GPUs, and hermes-mars-rover simulates Mars exploration with ROS2 and Gazebo. The fact that the ecosystem is already this diverse six weeks in suggests Hermes hit a real nerve.

8. What to watch for in Q2

Five things I'd watch closely over the next 90 days:

1. The skill marketplace network effect. Hermes ships 40+ bundled skills today. The skills hub (built into the CLI) browses agentskills.io, GitHub taps, skills.sh, and ClawHub. If the rate of community skill creation continues to accelerate, Hermes could have a more curated (smaller, but higher-quality) skill marketplace than OpenClaw's by mid-Q2. The wildcard: whether community skills meaningfully cross over from OpenClaw via the OGP (Open Gateway Protocol) federation that both projects support.

2. The multi-instance enterprise story. Hermes profiles let you run isolated agents per client/project on the same machine. If a few high-profile companies start using this for internal automation (DM Slack bot for engineering, separate Telegram bot for sales, all sharing infrastructure), Hermes becomes a real competitor to enterprise agent platforms. Watch for Hermes to ship per-tenant memory isolation and audit logging in v0.9 or v0.10.

3. The self-improvement narrative becoming measurable. Right now, "the agent that grows with you" is a tagline backed by anecdotal evidence. If Nous Research publishes benchmark numbers showing measurable improvement on repeated task families over time — real numbers, not vibes — Hermes becomes impossible to ignore.

4. The Nous Portal subscription economics. Nous gives away free tier access with promotional codes (AGENTHERMES01) and free MiMo v2 Pro for two weeks. At some point this has to convert to a sustainable subscription business.

5. The "first deletion" moment. Every fast-growing open-source project eventually faces a moment where its community has to decide what to remove from the core. Hermes is approaching this: 47 built-in tools, 14 platforms, 6 backends, 8 memory providers. At some point, things will need to move out of core into optional plugins. How Nous handles this transition — and whether the community trusts them with it — will define the next phase.